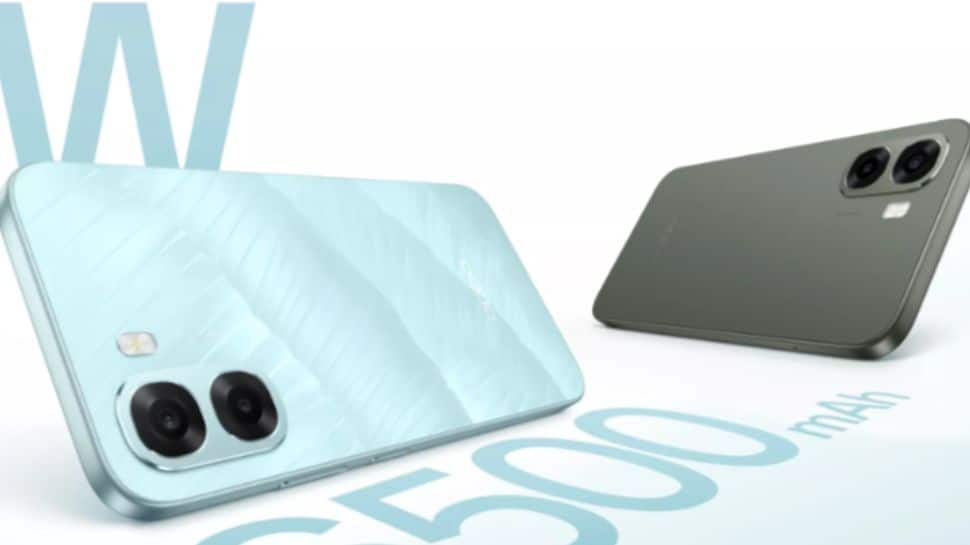

OPPO A6x 5G Price In India: Chinese smartphone maker OPPO has launched its budget-friendly OPPO A6x 5G smartphone in India. The newly launched device is the successor to the A5x 5G, which was introduced earlier this year. The OPPO A6x 5G runs on ColorOS 15, offering several new features designed to enhance the overall user experience, including the Luminous Rendering Engine. The OPPO A6x 5G comes in two elegant colour options, Ice Blue and Olive Green, giving users a stylish and refreshing look. It is available in three storage variants to suit different usage needs: 4GB RAM with 64GB storage, 4GB RAM with 128GB storage, and 6GB RAM with 128GB storage, providing a smoother performance and more space for apps and media. OPPO A6x 5G Specifications Add Zee News as a Preferred Source The smartphone sports a 6.75-inch LCD display with an HD+ resolution of 720×1,570 pixels, offering up to a 120Hz refresh rate, 256ppi pixel density, up to a 240Hz touch sampling rate, and peak brightness of up to 1,125 nits. The device is powered by an octa-core MediaTek Dimensity 6300 chipset paired with an ARM Mali-G57 MC2 GPU for smooth performance. The smartphone houses a large 6,500mAh battery that supports 45W wired SuperVOOC fast charging. On the photography front, the OPPO A6x 5G features a 13-megapixel primary rear camera with a 77-degree field of view and autofocus. For selfies and video chats, there is 5-megapixel shooter at the front, offering the same 77-degree field of view for selfies and video calls. On the connectivity front, the smartphone options include 5G, 4G LTE, Wi-Fi 5, Bluetooth 5.4, a USB Type-C port, and a 3.5mm audio jack. The handset measures 166.6×78.5×8.6mm and weighs around 212 grams. (Also Read: Vivo X300 Pro, Vivo X300 Launched In India With MediaTek Dimensity 9500 Chipset; Check Camera, Display, Battery Price, Sale And Other Specs) OPPO A6x 5G Price In India And Availability The OPPO A6x 5G comes in three variants which include the 4GB RAM with 64GB storage priced at Rs 12,499, 4GB RAM with 128GB storage priced at Rs 13,499, and 6GB RAM with 128GB storage priced at Rs 14,999. Consumers can purchase the smartphone across major online platforms such as Amazon, Flipkart, and the OPPO Store, as well as mainline retail outlets. Customers can also avail a three-month no-cost EMI option on select bank cards.

Indian Airports, Including Delhi IGI, Hit By Cyber Attack? What Is GPS Spoofing, How It Works, And Where It Is Used | Technology News

Cyber Attack On Indian Airports: The government has confirmed that several major airports, including Delhi, Mumbai, and Bengaluru, detected GPS spoofing signals last month. However, it assured that flight operations were not affected. The cyberattack raises serious concerns about aviation cybersecurity and has prompted heightened vigilance at key air travel hubs. The confirmation follows multiple reports of technical anomalies, including suspected spoofing of navigational systems, at some of the country’s busiest airports. Notably, the Ministry of Civil Aviation, along with relevant security agencies, continues to monitor the situation closely to ensure smooth air traffic operations and to implement strengthened cyber countermeasures. What Is GPS spoofing? Add Zee News as a Preferred Source GPS spoofing is a cyberattack in which attackers send fake GPS signals to a device, causing it to show the wrong location, time, or route. In simple words, it fools maps, navigation tools, and tracking apps into thinking they are somewhere else. For pilots, this can affect what they see on their screens, including the aircraft’s position and speed. This is different from GPS jamming, where signals are completely blocked, making the GPS stop working and show errors like “no signal.” How GPS Spoofing Works? GPS satellites send very weak signals to Earth, which devices use to calculate their location, speed, and time. In a GPS spoofing attack, an attacker uses special radio transmitters or software to create stronger fake GPS signals that look like the real ones. The device connects to these fake signals instead of the real satellites, causing it to show the wrong location, route, speed, or time even though it has not actually moved. GPS Spoofing: Where It is Used GPS spoofing can affect navigation and transport by misleading ships, aircraft, drones, trucks, and cars, causing them to deviate from their routes or hide their actual movements. It can also impact smartphones and apps, allowing users to fake their location in ride-hailing, gaming, financial, or social apps, sometimes to commit fraud or bypass geo-restrictions. In the field of security and defense, state or sophisticated actors may use GPS spoofing around sensitive areas to protect VIPs, conceal military activity, or disrupt enemy drones. Recent Delhi Airport And Airbus A320 Glitch Scare The government’s statement comes just weeks after more than 400 flights were delayed at Delhi airport because of a technical problem in the Air Traffic Control system. The issue was linked to the Automatic Message Switching System, which sends important flight plan data to the Auto Track System. The GPS spoofing incident also follows global flight disruptions that happened a few days earlier, caused by a software update needed for Airbus A320 airplanes.

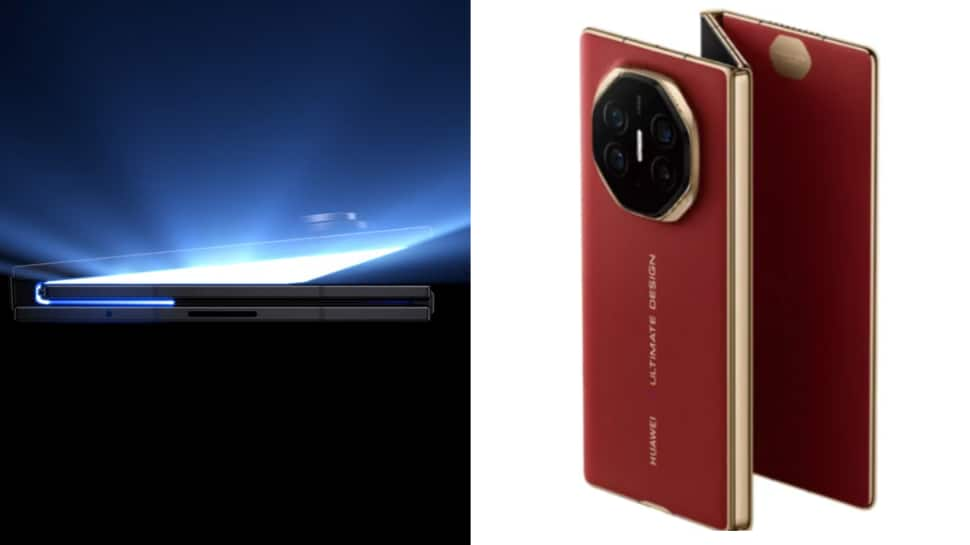

Samsung Galaxy Z TriFold vs Huawei Mate XTs: Which Triple-Foldable Wins On Camera, Display, Battery And Price? | Technology News

Samsung has taken a bold step into the next generation of foldable phones by launching the Galaxy Z TriFold. The device features a three-panel “tri-fold” design, with a 10-inch main display when fully opened and a 6.5-inch cover screen when folded. The price of the device is expected to be around 2.19 lakh. The Z TriFold runs on the Snapdragon 8 Elite “for Galaxy” chipset, paired with up to 16 GB RAM. The phone also packs a large 5,600 mAh battery. The design uses a refined dual-rail hinge system with a titanium-enclosed frame and an advanced Armour aluminium build — aimed at giving the phone better durability. Huawei’s Tri-Fold Mate XTs Add Zee News as a Preferred Source Before Samsung’s debut, Chinese manufacturer Huawei had already introduced its own tri-fold device: the Mate XTs. This is the second generation in their tri-fold line, following the original Mate XT. The price of the device starts at 2.22 lakh. The Mate XTs uses a 10.2-inch OLED LTPO display when fully unfolded, but can also be used in two-screen or regular smartphone modes — offering flexibility to switch between a tablet-like screen and a handy phone. The phone is powered by Huawei’s in-house Kirin 9020 5G chipset and runs HarmonyOS 5.1. A noticeable feature of the Mate XTs is its support for the M-Pencil stylus — beneficial for users who write notes, draw, or use the phone for creative or productivity tasks. For photography, it offers a triple rear-camera setup with a 50 MP main sensor, ultrawide and periscope telephoto lenses, and supports fast charging via 66 W wired and 50 W wireless. (Also Read: Samsung Unveils Galaxy Z TriFold, Its First Triple Folding Phone: Check Camera, Display, Battery And Price) Which one stands out? Display and versatility: Samsung Z TriFold’s 10-inch AMOLED + 6.5-inch cover screen delivers a smooth, robust experience, ideal for those who want foldable reliability and multitasking. Mate XTs offers more flexible form-factor options — smartphone mode, dual-screen, or full tablet-like screen — a compelling option for versatility. Performance and software: Samsung’s Snapdragon 8 Elite gives raw power, especially for heavy apps and multitasking. Huawei’s Kirin 9020 with HarmonyOS offers a more integrated environment, especially for stylus-based note-taking or creative tasks. Battery and charging: Both phones pack 5,600 mAh batteries. Samsung supports standard charging (45 W wired, 15 W wireless), while Huawei provides faster wired charging (66 W) and wireless charging (50 W + reverse charging), giving it a slight edge in charging flexibility. Unique features: Samsung — build quality, high-refresh-rate display, dual-SIM, and water/dust resistance. Huawei — stylus support, charging speed, adaptability of screen modes. What this mean for buyers? If you want a foldable device with a polished build, high performance, and a large, protected main screen — and are willing to pay a premium — Galaxy Z TriFold could be a good choice. On the other hand, if you value versatility (phone and tablet modes), stylus support, flexible charging, and a slightly more budget-conscious tri-fold experience — Mate XTs may appeal more. However, buying a phone depends on the individual preferences of buyers.

Vivo X300 Pro, Vivo X300 Launched In India With MediaTek Dimensity 9500 Chipset; Check Camera, Display, Battery Price, Sale And Other Specs | Technology News

Vivo X300 Series Price In India: Vivo has launched its new X300 flagship series in the Indian market, featuring the Vivo X300 and X300 Pro smartphones. The devices run on the latest OriginOS 6 based on Android 16 and are powered by a MediaTek chipset. The series was originally unveiled in China in early October, followed by a rollout in select global markets a few weeks later. Notably, the Vivo X300 lineup also supports an additional teleconverter kit. With these new models, Vivo is positioning the X300 series as direct competitors to Oppo’s Find X9 Pro and Find X9. Vivo X300 Pro Specifications: Add Zee News as a Preferred Source The smartphone features a 6.78-inch 1.5K BOE Q10+ LTPO OLED display with a 120Hz refresh rate and Circular Polarisation 2.0 for better outdoor visibility. It is powered by the MediaTek Dimensity 9500 chipset, paired with 16GB LPDDR5X RAM and 512GB UFS 4.1 storage, running on Android 16 with Vivo’s latest Indian UI. For photography, the device sports a 50MP Sony LYT-828 main sensor, a 50MP JN1 ultrawide lens, and a 200MP periscope telephoto camera with OIS, supported by Vivo’s V3+ and Vs1 dedicated imaging chips, Zeiss colour science, and an optional 2.35x Telephoto Extender. For selfies and quality video chats, there is a 50MP JN1 shooter at the front. The phone packs a 6,510mAh battery with 90W wired and 40W wireless charging. Additional features include an ultrasonic in-display fingerprint sensor, Wi-Fi 7, Bluetooth 5.4, NFC, GPS, IP68 rating, dual speakers, an Action Button, a large X-axis linear motor, a signal amplifier chip, and four Wi-Fi boosters, all in a 226g body.

Samsung Unveils Galaxy Z TriFold, Its First Triple Folding Phone: Check Camera, Display, Battery And Price | Technology News

Samsung Galaxy Z TriFold Price: South Korean tech giant Samsung has unveiled its first triple folding smartphone, the Galaxy Z TriFold, as a special edition. While this is a big step for Samsung, it is not the first company to introduce a triple folding design. Huawei released a similar device last year at a similarly high price. However, there is no information available regarding India launch. The Galaxy Z TriFold can fold in three different ways and opens into a large display. To help users fold it correctly, Samsung has added a smart alert system that shows on screen warnings and uses vibrations if the device is not being folded the right way. The phone is also very slim, measuring just 3.9mm at its thinnest point. We don’t just follow what’s next. We shape it. Introducing the Galaxy Z TriFold. Coming to Singapore soon, exclusively on https://t.co/mV7xyuMHbf. Stay tuned for more. pic.twitter.com/5tmWURA2dk — Samsung Singapore (@SamsungSG) December 2, 2025 Add Zee News as a Preferred Source Samsung Galaxy Z TriFold Specifications: The Samsung Galaxy Z TriFold comes with major upgrades, including new dual titanium hinges that keep the main folding screen fully protected when the phone is closed. It is powered by the Snapdragon 8 Elite Mobile Platform for Galaxy and unfolds into a massive 10-inch display with a resolution of 2,160 x 1,584 pixels, an adaptive refresh rate of 1 to 120Hz, and peak brightness of 1,600 nits. When opened, the 10-inch screen works like three 6.5-inch displays placed side by side. The phone also features a ceramic and glass fiber reinforced polymer back panel to prevent cracks, and Samsung says the screen closes with a very small gap. For photography, it includes a 200MP main camera, a 12MP ultra wide lens, and a 10MP telephoto lens, along with two 10MP front cameras—one on the cover display and one inside. The device packs a 5,600mAh battery with 45W fast charging and offers two storage options: 16GB RAM with 1TB storage or 16GB RAM with 512GB storage. It also carries an IP48 rating for water resistance and includes a side mounted fingerprint sensor and multiple sensors such as an accelerometer, barometer, gyro, geomagnetic sensor, hall sensor, proximity sensor, and light sensor. Samsung Galaxy Z TriFold Price And Sale Date The Samsung Galaxy Z TriFold will be available in Korea starting December 12, 2025, followed by launches in China, Taiwan, Singapore, the UAE, and the US. Buyers will also get a 6 month trial of Google AI Pro and a one time 50 percent discount on display repairs. Samsung will showcase the TriFold in select retail stores, allowing customers to try it out in person. The phone will go on sale on December 12 at a price of $2,443, which is more than double the cost of the new iPhone 17.

Who Is Amar Subramanya? Google And Microsoft Veteran Becomes Apple’s New VP Of AI | Technology News

Apple’s New Vice Pesident Of AI: Apple has announced that Bengaluru-educated artificial intelligence researcher Amar Subramanya will join the company as Vice President of AI, reporting to Craig Federighi. The company also said that John Giannandrea, Senior Vice President of Machine Learning and AI Strategy, is stepping down from his role. He will continue as an advisor until his retirement in spring 2026. Amar Subramanya’s Senior Stints at Google and Microsoft Subramanya brings extensive experience to Apple. He recently served as Corporate Vice President of AI at Microsoft and previously spent 16 years at Google, where he led engineering for the Google Gemini Assistant. Apple said his deep knowledge of artificial intelligence and machine learning, along with his ability to turn research into real products, will help drive future Apple Intelligence features. Add Zee News as a Preferred Source “Subramanya will be leading critical areas, including Apple Foundation Models, ML research, and AI Safety and Evaluation. The remaining parts of Giannandrea’s organisation will move to Sabih Khan and Eddy Cue to align more closely with similar teams,” Apple announced. “In addition to expanding his leadership team and AI responsibilities with Amar’s arrival, Craig has been instrumental in driving our AI efforts, including overseeing our work to bring a more personalised Siri to users next year,” Cook added. John Giannandrea Played a Crucial Role in AI and ML Strategy According to the iPhone maker, since joining Apple in 2018, John Giannandrea has played a key role in shaping the company’s AI and machine learning strategy. He built a world-class team and led them in developing and deploying essential AI technologies. “We are thankful for the role John played in building and advancing our AI work, helping Apple continue to innovate and enrich the lives of our users,” said Tim Cook, Apple’s CEO. AI has long been central to Apple’s vision, and “we are pleased to welcome Amar to Craig’s leadership team and bring his exceptional AI expertise to Apple,” Cook added. (Also Read: OnePlus 15R India Launch Officially Confirmed, Could Debut With Snapdragon 8 Elite Chipset; Check Expected Camera, Battery, Display, Price And Other Specs) The team is currently responsible for Apple Foundation Models, Search and Knowledge, ML Research, and AI Infrastructure. With Giannandrea’s contributions as the foundation, Federighi’s expanded oversight, and Subramanya’s deep expertise guiding the next generation of AI technologies, Apple is well positioned to accelerate its work in delivering intelligent, trusted, and deeply personal experiences. (With IANS Inputs)

Centre Orders Pre-Installed ‘Sanchar Saathi App’ On All New Handsets – Here’s Why | India News

The Central government has announced that it has asked mobile phone manufacturers and importers to ensure that the ‘Sanchar Saathi’ mobile application is pre-installed on all new mobile handsets manufactured or imported for use in India. Also Read- What Is Sanchar Saathi? Govt Makes It Mandatory On All New Smartphones: Here’s What App Does What Is The Reason Behind Centre’s Order? Add Zee News as a Preferred Source The decision has been taken with the aim of safeguarding the citizens from buying non-genuine items. It is expected to enable easy reporting of suspected misuse of telecom resources and increase the effectiveness of the ‘Sanchar Saathi’ initiative. DoT is undertaking the ‘Sanchar Saathi’ initiative for curbing the misuse of telecom resources for cyber fraud. With this move, the Centre is also aiming to strengthen telecom cybersecurity. The companies have to complete the implementation in 90 days and submit a report in 120 days. DoT’s Guidelines For Mobile Manufacturers And Importers According to the guidelines, issued on November 28, mobile manufacturers and importers have to ensure that the pre-installed Sanchar Saathi application is readily visible and accessible to the end users at the time of first use or device setup and that its functionalities are not disabled or restricted. Additionally, all such devices that have already been manufactured and are in sales channels in India, the manufacturer and importers of mobile handsets shall endeavour to push the app through software updates. What Will ‘Sanchar Saathi’ App Do? The department has developed the ‘Sanchar Saathi’ portal and app, which enables citizens to check the genuineness of a mobile handset through the IMEI number, along with other facilities like reporting suspected fraudulent communications, lost or stolen mobile handsets, checking mobile connections in their name, and trusted contact details of banks and financial institutions. In a separate statement, the DoT said that it has observed that some of the app-based communication services that are utilising Indian mobile numbers for identification of their customers or users or for provisioning or delivery of services, allow users to consume their services without the availability of the underlying Subscriber Identity Module (SIM) within the device in which the app-based services are running. This feature is being misused for cyberfraud, especially from operating outside the country. (with IANS inputs)

Fine-Tuning a BERT Model – MachineLearningMastery.com

import collections import dataclasses import functools import torch import torch.nn as nn import torch.optim as optim import tqdm from datasets import load_dataset from tokenizers import Tokenizer from torch import Tensor # BERT config and model defined previously @dataclasses.dataclass class BertConfig: “”“Configuration for BERT model.”“” vocab_size: int = 30522 num_layers: int = 12 hidden_size: int = 768 num_heads: int = 12 dropout_prob: float = 0.1 pad_id: int = 0 max_seq_len: int = 512 num_types: int = 2 class BertBlock(nn.Module): “”“One transformer block in BERT.”“” def __init__(self, hidden_size: int, num_heads: int, dropout_prob: float): super().__init__() self.attention = nn.MultiheadAttention(hidden_size, num_heads, dropout=dropout_prob, batch_first=True) self.attn_norm = nn.LayerNorm(hidden_size) self.ff_norm = nn.LayerNorm(hidden_size) self.dropout = nn.Dropout(dropout_prob) self.feed_forward = nn.Sequential( nn.Linear(hidden_size, 4 * hidden_size), nn.GELU(), nn.Linear(4 * hidden_size, hidden_size), ) def forward(self, x: Tensor, pad_mask: Tensor) -> Tensor: # self-attention with padding mask and post-norm attn_output, _ = self.attention(x, x, x, key_padding_mask=pad_mask) x = self.attn_norm(x + attn_output) # feed-forward with GeLU activation and post-norm ff_output = self.feed_forward(x) x = self.ff_norm(x + self.dropout(ff_output)) return x class BertPooler(nn.Module): “”“Pooler layer for BERT to process the [CLS] token output.”“” def __init__(self, hidden_size: int): super().__init__() self.dense = nn.Linear(hidden_size, hidden_size) self.activation = nn.Tanh() def forward(self, x: Tensor) -> Tensor: x = self.dense(x) x = self.activation(x) return x class BertModel(nn.Module): “”“Backbone of BERT model.”“” def __init__(self, config: BertConfig): super().__init__() # embedding layers self.word_embeddings = nn.Embedding(config.vocab_size, config.hidden_size, padding_idx=config.pad_id) self.type_embeddings = nn.Embedding(config.num_types, config.hidden_size) self.position_embeddings = nn.Embedding(config.max_seq_len, config.hidden_size) self.embeddings_norm = nn.LayerNorm(config.hidden_size) self.embeddings_dropout = nn.Dropout(config.dropout_prob) # transformer blocks self.blocks = nn.ModuleList([ BertBlock(config.hidden_size, config.num_heads, config.dropout_prob) for _ in range(config.num_layers) ]) # [CLS] pooler layer self.pooler = BertPooler(config.hidden_size) def forward(self, input_ids: Tensor, token_type_ids: Tensor, pad_id: int = 0, ) -> tuple[Tensor, Tensor]: # create attention mask for padding tokens pad_mask = input_ids == pad_id # convert integer tokens to embedding vectors batch_size, seq_len = input_ids.shape position_ids = torch.arange(seq_len, device=input_ids.device).unsqueeze(0) position_embeddings = self.position_embeddings(position_ids) type_embeddings = self.type_embeddings(token_type_ids) token_embeddings = self.word_embeddings(input_ids) x = token_embeddings + type_embeddings + position_embeddings x = self.embeddings_norm(x) x = self.embeddings_dropout(x) # process the sequence with transformer blocks for block in self.blocks: x = block(x, pad_mask) # pool the hidden state of the `[CLS]` token pooled_output = self.pooler(x[:, 0, :]) return x, pooled_output # Define new BERT model for question answering class BertForQuestionAnswering(nn.Module): “”“BERT model for SQuAD question answering.”“” def __init__(self, config: BertConfig): super().__init__() self.bert = BertModel(config) # Two outputs: start and end position logits self.qa_outputs = nn.Linear(config.hidden_size, 2) def forward(self, input_ids: Tensor, token_type_ids: Tensor, pad_id: int = 0, ) -> tuple[Tensor, Tensor]: # Get sequence output from BERT (batch_size, seq_len, hidden_size) seq_output, pooled_output = self.bert(input_ids, token_type_ids, pad_id=pad_id) # Project to start and end logits logits = self.qa_outputs(seq_output) # (batch_size, seq_len, 2) start_logits = logits[:, :, 0] # (batch_size, seq_len) end_logits = logits[:, :, 1] # (batch_size, seq_len) return start_logits, end_logits # Load SQuAD dataset for question answering dataset = load_dataset(“squad”) # Load the pretrained BERT tokenizer TOKENIZER_PATH = “wikitext-2_wordpiece.json” tokenizer = Tokenizer.from_file(TOKENIZER_PATH) # Setup collate function to tokenize question-context pairs for the model def collate(batch: list[dict], tokenizer: Tokenizer, max_len: int, ) -> tuple[Tensor, Tensor, Tensor, Tensor]: “”“Collate question-context pairs for the model.”“” cls_id = tokenizer.token_to_id(“[CLS]”) sep_id = tokenizer.token_to_id(“[SEP]”) pad_id = tokenizer.token_to_id(“[PAD]”) input_ids_list = [] token_type_ids_list = [] start_positions = [] end_positions = [] for item in batch: # Tokenize question and context question, context = item[“question”], item[“context”] question_ids = tokenizer.encode(question).ids context_ids = tokenizer.encode(context).ids # Build input: [CLS] question [SEP] context [SEP] input_ids = [cls_id, *question_ids, sep_id, *context_ids, sep_id] token_type_ids = [0] * (len(question_ids)+2) + [1] * (len(context_ids)+1) # Truncate or pad to max length if len(input_ids) > max_len: input_ids = input_ids[:max_len] token_type_ids = token_type_ids[:max_len] else: input_ids.extend([pad_id] * (max_len – len(input_ids))) token_type_ids.extend([1] * (max_len – len(token_type_ids))) # Find answer position in tokens: Answer may not be in the context start_pos = end_pos = 0 if len(item[“answers”][“text”]) > 0: answers = tokenizer.encode(item[“answers”][“text”][0]).ids # find the context offset of the answer in context_ids for i in range(len(context_ids) – len(answers) + 1): if context_ids[i:i+len(answers)] == answers: start_pos = i + len(question_ids) + 2 end_pos = start_pos + len(answers) – 1 break if end_pos >= max_len: start_pos = end_pos = 0 # answer is clipped, hence no answer input_ids_list.append(input_ids) token_type_ids_list.append(token_type_ids) start_positions.append(start_pos) end_positions.append(end_pos) input_ids_list = torch.tensor(input_ids_list) token_type_ids_list = torch.tensor(token_type_ids_list) start_positions = torch.tensor(start_positions) end_positions = torch.tensor(end_positions) return (input_ids_list, token_type_ids_list, start_positions, end_positions) batch_size = 16 max_len = 384 # Longer for Q&A to accommodate context collate_fn = functools.partial(collate, tokenizer=tokenizer, max_len=max_len) train_loader = torch.utils.data.DataLoader(dataset[“train”], batch_size=batch_size, shuffle=True, collate_fn=collate_fn) val_loader = torch.utils.data.DataLoader(dataset[“validation”], batch_size=batch_size, shuffle=False, collate_fn=collate_fn) # Create Q&A model with a pretrained foundation BERT model device = torch.device(“cuda” if torch.cuda.is_available() else “cpu”) config = BertConfig() model = BertForQuestionAnswering(config) model.to(device) model.bert.load_state_dict(torch.load(“bert_model.pth”, map_location=device)) # Training setup loss_fn = nn.CrossEntropyLoss() optimizer = optim.AdamW(model.parameters(), lr=2e–5) num_epochs = 3 for epoch in range(num_epochs): model.train() # Training with tqdm.tqdm(train_loader, desc=f“Epoch {epoch+1}/{num_epochs}”) as pbar: for batch in pbar: # get batched data input_ids, token_type_ids, start_positions, end_positions = batch input_ids = input_ids.to(device) token_type_ids = token_type_ids.to(device) start_positions = start_positions.to(device) end_positions = end_positions.to(device) # forward pass start_logits, end_logits = model(input_ids, token_type_ids) # backward pass optimizer.zero_grad() start_loss = loss_fn(start_logits, start_positions) end_loss = loss_fn(end_logits, end_positions) loss = start_loss + end_loss loss.backward() optimizer.step() # update progress bar pbar.set_postfix(loss=float(loss)) pbar.update(1) # Validation: Keep track of the average loss and accuracy model.eval() val_loss, num_matches, num_batches, num_samples = 0, 0, 0, 0 with torch.no_grad(): for batch in val_loader: # get batched data input_ids, token_type_ids, start_positions, end_positions = batch input_ids = input_ids.to(device) token_type_ids = token_type_ids.to(device) start_positions = start_positions.to(device) end_positions = end_positions.to(device) # forward pass on validation data start_logits, end_logits = model(input_ids, token_type_ids) # compute loss start_loss = loss_fn(start_logits, start_positions) end_loss = loss_fn(end_logits, end_positions) loss = start_loss + end_loss val_loss += loss.item() num_batches += 1 # compute accuracy pred_start = start_logits.argmax(dim=–1) pred_end = end_logits.argmax(dim=–1) match = (pred_start == start_positions) & (pred_end == end_positions) num_matches += match.sum().item() num_samples += len(start_positions) avg_loss = val_loss / num_batches acc = num_matches / num_samples print(f“Validation {epoch+1}/{num_epochs}: acc {acc:.4f}, avg loss {avg_loss:.4f}”) # Save the fine-tuned model torch.save(model.state_dict(), f“bert_model_squad.pth”)

COAI Backs Govt’s SIM Binding Mandate For App Based Communication Services | Technology News

New Delhi: The Cellular Operators Association of India (COAI) on Monday welcomed the Department of Telecommunications’ (DoT) directive mandating Subscriber Identity Module (SIM)-binding for devices for app-based communication services, saying the move will bolster national security and curb cyber fraud. “Continuous linkage ensures complete accountability and traceability for any activity undertaken by the SIM card and its associated communication app, thereby closing long-persistent gaps that have enabled anonymity and misuse,” said Lt. Gen. Dr. S.P. Kochhar, Director General, COAI. This is a much-needed initiative in ensuring consumer trust, accountability, traceability and further alignment with evolving regulatory frameworks, the release said. The association also called on the DoT to engage the Reserve Bank of India (RBI) to mandate SMS one‑time passwords (OTP) as the primary authentication factor for all financial transactions. Add Zee News as a Preferred Source “SMS OTP continues to remain the most secure, operator verified channel with guaranteed traceability. Strengthening this requirement will create a consistent and secure authentication framework across the financial ecosystem, further reducing the risk of fraud and reinforcing consumer trust,” the statement said. App based communication services must remain continuously linked to the SIM card, which is associated with the mobile number used for identification of customers/users or for provisioning or delivery of services. The directive mandated that the user’s subscriber identity module (SIM) used at registration must be bound to the services of web-based platforms such as WhatsApp, Telegram, Signal, Arattai, Snapchat, Sharechat, and others. As the service must remain tied to the SIM in the phone, WhatsApp Web and similar web platforms are forced to log users out every six hours once the rule is implemented. Each web-based platform must submit a compliance report within four months. The change will disrupt the seamless multi‑device experience many gained by keeping WhatsApp Web running throughout the workday.

How to Speed-Up Training of Language Models

Language model training is slow, even when your model is not very large. This is because you need to train the model with a large dataset and there is a large vocabulary. Therefore, it needs many training steps for the model to converge. However, there are some techniques known to speed up the training process. In this article, you will learn about them. In particular, you will learn about: Using optimizers Using learning rate schedulers Other techniques for better convergence or reduced memory consumption Let’s get started. How to Speed-Up Training of Language ModelsPhoto by Emma Fabbri. Some rights reserved. Overview This article is divided into four parts; they are: Optimizers for Training Language Models Learning Rate Schedulers Sequence Length Scheduling Other Techniques to Help Training Deep Learning Models Optimizers for Training Language Models Adam has been the most popular optimizer for training deep learning models. Unlike SGD and RMSProp, Adam uses both the first and second moment of the gradient to update the parameters. Using the second moment can help the model converge faster and more stably, at the expense of using more memory. However, when training language models nowadays, you will usually use AdamW, the Adam optimizer with weight decay. Weight decay is a regularization technique to prevent overfitting. It usually involves adding a small penalty to the loss function. But in AdamW, the weight decay is applied directly to the weights instead. This is believed to be more stable because the regularization term is decoupled from the calculated gradient. It is also more robust to hyperparameter tuning, as the effect of the regularization term is applied explicitly to the weight update. In formula, AdamW weight update algorithm is as follows: $$\begin{aligned}g_t &= \nabla_\theta L(\theta_{t-1}) \\m_t &= \beta_1 m_{t-1} + (1 – \beta_1) g_t \\v_t &= \beta_2 v_{t-1} + (1 – \beta_2) g_t^2 \\\hat{m_t} &= m_t / (1 – \beta_1^t) \\\hat{v_t} &= v_t / (1 – \beta_2^t) \\\theta_t &= \theta_{t-1} – \alpha \Big( \frac{\hat{m_t}}{\sqrt{\hat{v_t}} + \epsilon} + \lambda \theta_{t-1} \Big)\end{aligned}$$ The model weight at step $t$ is denoted by $\theta_t$. The $g_t$ is the computed gradient from the loss function $L$, and $g_t^2$ is the elementwise square of the gradient. The $m_t$ and $v_t$ are the moving average of the first and second moment of the gradient, respectively. Learning rate $\alpha$, weight decay $\lambda$, and moving average decay rates $\beta_1$ and $\beta_2$ are hyperparameters. A small value $\epsilon$ is used to avoid division by zero. A common choice would be $\beta_1 = 0.9$, $\beta_2 = 0.999$, $\epsilon = 10^{-8}$, and $\lambda = 0.1$. The key of AdamW is the $\lambda \theta_{t-1}$ term in the gradient update, instead of in the loss function. AdamW is not the only choice of optimizer. Some newer optimizers have been proposed recently, such as Lion, SOAP, and AdEMAMix. You can see the paper Benchmarking Optimizers for Large Language Model Pretraining for a summary. Learning Rate Schedulers A learning rate scheduler is used to adjust the learning rate during training. Usually, you would prefer a larger learning rate for the early training steps and reduce the learning rate as training progresses to help the model converge. You can add a warm-up period to increase the learning rate from a small value to the peak over a short period (usually 0.1% to 2% of total steps), then the learning rate is decreased over the remaining training steps. A warm-up period usually starts with a near-zero learning rate and increases linearly to the peak learning rate. A model starts with randomized initial weights. Starting with a large learning rate can cause poor convergence, especially for big models, large batches, and adaptive optimizers. You can see the need for warm-up from the equations above. Assume the model is uncalibrated; the loss may vary greatly between subsequent steps. Then the first and second moments $m_t$ and $v_t$ will be fluctuating greatly, and the gradient update $\theta_t – \theta_{t-1}$ will also be fluctuating greatly. Hence, you would prefer the loss to be stable and move slowly so that AdamW can build a reliable running average. This can be easily achieved if $\alpha$ is small. At the learning rate reduction phase, there are a few choices: cosine decay: $LR = LR_{\max} \cdot \frac12 \Big(1 + \cos \frac{\pi t}{T}\Big)$ square-root decay: $LR = LR_{\max} \cdot \sqrt{\frac{T – t}{T}}$ linear decay: $LR = LR_{\max} \cdot \frac{T – t}{T}$ Plot of the three decay functions A large learning rate can help the model converge faster while a small learning rate can help the model stabilize. Therefore, you want the learning rate to be large at the beginning when the model is still uncalibrated, but small at the end when the model is close to its optimal state. All decay schemes above can achieve this, but you would not want the learning rate to become “too small too soon” or “too large too late”. Cosine decay is the most popular choice because it drops the learning rate more slowly at the beginning and stays longer at a low learning rate near the end, which are desirable properties to help the model converge faster and stabilize respectively. n PyTorch, you have the CosineAnnealingLR scheduler to implement cosine decay. For the warm-up period, you need to combine with the LinearLR scheduler. Below is an example of the training loop using AdamW, CosineAnnealingLR, and LinearLR: import torch import torch.nn as nn import torch.optim as optim from torch.optim.lr_scheduler import LinearLR, CosineAnnealingLR, SequentialLR # Example setup model = torch.nn.Linear(10, 1) X, y = torch.randn(5, 10), torch.randn(5) loss_fn = nn.MSELoss() optimizer = optim.AdamW(model.parameters(), lr=1e-2, betas=(0.9, 0.999), eps=1e-8, weight_decay=0.1) # Define learning rate schedulers warmup_steps = 10 total_steps = 100 min_lr = 1e-4 warmup_lr = LinearLR(optimizer, start_factor=0.1, end_factor=1.0, total_iters=warmup_steps) cosine_lr = CosineAnnealingLR(optimizer, T_max=total_steps – warmup_steps, eta_min=min_lr) combined_lr = SequentialLR(optimizer, schedulers=[warmup_lr, cosine_lr], milestones=[warmup_steps]) # Training loop for step in range(total_steps): # train one epoch y_pred = model(X) loss = loss_fn(y_pred, y) # print loss and learning rate print(f”Step {step+1}/{total_steps}: loss {loss.item():.4f}, lr {combined_lr.get_last_lr()[0]:.4f}”) # backpropagate and update weights optimizer.zero_grad()