OnePlus 15 India Launch: Chinese smartphone manufacturer OnePlus is set to launch the OnePlus 15 smartphone in India today. It is one of the most anticipated smartphones from the leading tech player is being unleashed tonight at 7 PM IST. Apart from the OnePlus 15, the company might also introduce new accessories which will come bundled for the Indian users exclusively in the market. Notably, the company has said that the OnePlus 15 smartphone will go on sale at 8 PM IST on November 13 itself. OnePlus 15 India Launch: When And Where To Watch Live Fans and tech enthusiasts can easily catch the live stream on the OnePlus India YouTube channel at 7PM IST, where the company will reveal key details about the device which include its final price, camera specifications, battery, variants, availability, launch offers, and more. Add Zee News as a Preferred Source OnePlus 15 India Launch: Specifications (Expected) The OnePlus 15 is expected to feature a stunning 6.78-inch BOE Flexible AMOLED LTPO display with a 1.5K resolution and a super-smooth 165Hz refresh rate. It supports up to 1,800 nits of peak brightness and a 100% DCI-P3 colour gamut, ensuring vivid and lifelike visuals even under bright sunlight. The smartphone is expected to feature a Qualcomm’s latest Snapdragon 8 Elite Gen 5 chipset, built on an efficient 3nm process for exceptional performance and power efficiency. It will run on Android 16-based OxygenOS 16 in India, offering users a clean, fast, and refined software experience. On the photography front, the smartphone is expected to boast a triple 50MP setup, led by a Sony sensor for the main lens, accompanied by an ultra-wide shooter and a 3.5x telephoto lens for detailed zoom photography. For selfies and video chats, the 32MP front camera will support 4K video recording at 60fps — a first for any OnePlus front shooter. (Also Read: iQOO 15 Set To Launch In India On November 26: Price, Features, And Pre-Booking Details Revealed) OnePlus 15 India Launch: Price And Launch Offer (Expected) The OnePlus 15 is expected to be the brand’s most expensive non-foldable flagship yet, surpassing the OnePlus 13, which launched in India at Rs 69,999. However, customers can look forward to launch offers that may reduce the effective price, such as trade-in benefits of up to Rs 4,000 and a bundle deal with OnePlus Nord Buds, available starting November 13.

Nine In 10 Indian SMBs Bet On AI As Tier 2 Cities Lead Adoption: Report | Technology News

New Delhi: Nine in 10 Indian small and medium businesses (SMBs) are already investing in or planning AI adoption, with 92 per cent of them expecting business growth in the next 12 months, a report said on Thursday. The report from career portal LinkedIn research, commissioned by YouGov, said that SMBs in some of the fastest growing cities in India led in AI adoption such as Chandigarh (100 per cent), Jaipur (94 per cent), and Ahmedabad (94 per cent). SMBs are “not just hopeful but rebuilding around smarter systems, skilled talent, and trusted digital platforms,” the report mentioned. The survey of 1,027 office holders in SMB and MSMEs across major Indian cities found 57 per cent view AI and automation as essential to stay competitive, 54 per cent cite operational efficiency as key to protecting margins, and 51 per cent mentioned digital transformation as critical to survival. Nearly all surveyed businesses use AI to automate workflows (92 per cent) and strengthen analytics (93 per cent), while over 90 per cent use it to streamline hiring, marketing and sales, the report noted. Add Zee News as a Preferred Source “Indian SMBs are reimagining how business is built, using AI to drive efficiency, skills-first hiring to build capability, and trusted digital ecosystems to expand their market footprint,” said Kumaresh Pattabiraman, Country Manager, LinkedIn India. SMBs in Delhi (61 per cent) and Pune (60 per cent) lead AI adoption to manage costs, while SMBs in Bengaluru (63 per cent) focused on operational efficiency and those in Chennai (62 per cent) concentrated on building a skilled talent pipeline. The hiring priorities across SMBs have shifted to digital literacy and AI fluency with 63 per cent of them prioritising it. Problem‑solving (57 per cent) and data analysis (50 per cent) scored over traditional qualifications.

Battery Saving Tips: 5 Smart Settings To Stop Your Phone Battery From Draining Fast | Technology News

Battery saving tips: In today’s digital age, smartphones have become an essential part of our daily lives, from communication and entertainment to work and navigation. However, one common problem that users face is battery draining. While modern smartphones come with powerful batteries, several background settings can quietly consume power and shorten your battery life. Here are five important settings you can adjust to make your phone last longer on a single charge. Lower Screen Brightness and Timeout The display is one of the biggest battery consumers on any smartphone. High screen brightness and long screen-on time can quickly drain your battery. Add Zee News as a Preferred Source To save power, reduce the brightness level manually or enable auto-brightness so that the phone adjusts it based on lighting conditions. Also, reduce the screen timeout duration to 15 or 30 seconds, so the display turns off quickly when not in use. This simple change can extend your battery life significantly throughout the day. Turn Off Location Services When Not Needed Location services or GPS tracking are necessary for navigation and delivery apps, but they also use a lot of battery when running in the background. Go to your settings and disable location access for apps that don’t need it all the time. You can choose the option “Allow only while using the app” instead of “Always allow.” For even more savings, turn off GPS completely when you don’t need it. This small habit can help conserve a noticeable amount of battery power. (Also Read: What’s New In ChatGPT 5.1? Hidden Features And Human-Like Conversation Capabilities Revealed) Manage Background App Refresh and Notifications Many apps continue to refresh content in the background even when you’re not using them. Social media, email, and messaging apps are the most common examples. To reduce battery drain, go to your phone’s settings and limit background app refresh. You can either turn it off completely or allow only essential apps to refresh. Disabling unnecessary app notifications will save your battery draining to a good extent. Use Battery Saver or Power Saving Mode Almost every smartphone now comes with a built-in battery saver or power saving mode. When enabled, this feature automatically reduces system performance, limits background data, and dims the display to help your phone last longer. You can manually activate this mode when your battery drops below a certain percentage or set it to turn on automatically. It’s one of the most effective tools to extend battery life, especially during long days or travel. (Also Read: Why Do Clocks Make Tick-Tick Sound Only? Know The Reason Behind It) Disable Connectivity Features You’re Not Using Wi-Fi, Bluetooth, mobile data, and hotspot functions consume a large amount of power when left on unnecessarily. If you’re not using them, it’s best to switch them off. For instance, turn off Bluetooth after disconnecting headphones, or disable Wi-Fi when you’re on the move and not connected to a known network. Airplane Mode can also be useful when you’re in an area with weak signals, as the phone uses more energy trying to connect. Final Tip By making these small adjustments, you can prevent your phone battery from draining quickly and enjoy longer use without constantly reaching for the charger. Regularly checking your battery settings and app usage can help you identify which features consume the most power — giving you better control and efficiency over your device.

Expert-Level Feature Engineering: Advanced Techniques for High-Stakes Models

In this article, you will learn three expert-level feature engineering strategies — counterfactual features, domain-constrained representations, and causal-invariant features — for building robust and explainable models in high-stakes settings. Topics we will cover include: How to generate counterfactual sensitivity features for decision-boundary awareness. How to train a constrained autoencoder that encodes a monotonic domain rule into its representation. How to discover causal-invariant features that remain stable across environments. Without further delay, let’s begin. Expert-Level Feature Engineering: Advanced Techniques for High-Stakes ModelsImage by Editor Introduction Building machine learning models in high-stakes contexts like finance, healthcare, and critical infrastructure often demands robustness, explainability, and other domain-specific constraints. In these situations, it can be worth going beyond classic feature engineering techniques and adopting advanced, expert-level strategies tailored to such settings. This article presents three such techniques, explains how they work, and highlights their practical impact. Counterfactual Feature Generation Counterfactual feature generation comprises techniques that quantify how sensitive predictions are to decision boundaries by constructing hypothetical data points from minimal changes to original features. The idea is simple: ask “how much must an original feature value change for the model’s prediction to cross a critical threshold?” These derived features improve interpretability — e.g. “how close is a patient to a diagnosis?” or “what is the minimum income increase required for loan approval?”— and they encode sensitivity directly in feature space, which can improve robustness. The Python example below creates a counterfactual sensitivity feature, cf_delta_feat0, measuring how much input feature feat_0 must change (holding all others fixed) to cross the classifier’s decision boundary. We’ll use NumPy, pandas, and scikit-learn. import numpy as np import pandas as pd from sklearn.linear_model import LogisticRegression from sklearn.datasets import make_classification from sklearn.preprocessing import StandardScaler # Toy data and baseline linear classifier X, y = make_classification(n_samples=500, n_features=5, random_state=42) df = pd.DataFrame(X, columns=[f”feat_{i}” for i in range(X.shape[1])]) df[‘target’] = y scaler = StandardScaler() X_scaled = scaler.fit_transform(df.drop(columns=”target”)) clf = LogisticRegression().fit(X_scaled, y) # Decision boundary parameters weights = clf.coef_[0] bias = clf.intercept_[0] def counterfactual_delta_feat0(x, eps=1e-9): “”” Minimal change to feature 0, holding other features fixed, required to move the linear logit score to the decision boundary (0). For a linear model: delta = -score / w0 “”” score = np.dot(weights, x) + bias w0 = weights[0] return -score / (w0 + eps) df[‘cf_delta_feat0’] = [counterfactual_delta_feat0(x) for x in X_scaled] df.head() 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 import numpy as np import pandas as pd from sklearn.linear_model import LogisticRegression from sklearn.datasets import make_classification from sklearn.preprocessing import StandardScaler # Toy data and baseline linear classifier X, y = make_classification(n_samples=500, n_features=5, random_state=42) df = pd.DataFrame(X, columns=[f“feat_{i}” for i in range(X.shape[1])]) df[‘target’] = y scaler = StandardScaler() X_scaled = scaler.fit_transform(df.drop(columns=“target”)) clf = LogisticRegression().fit(X_scaled, y) # Decision boundary parameters weights = clf.coef_[0] bias = clf.intercept_[0] def counterfactual_delta_feat0(x, eps=1e–9): “”“ Minimal change to feature 0, holding other features fixed, required to move the linear logit score to the decision boundary (0). For a linear model: delta = -score / w0 ““” score = np.dot(weights, x) + bias w0 = weights[0] return –score / (w0 + eps) df[‘cf_delta_feat0’] = [counterfactual_delta_feat0(x) for x in X_scaled] df.head() Domain-Constrained Representation Learning (Constrained Autoencoders) Autoencoders are widely used for unsupervised representation learning. We can adapt them for domain-constrained representation learning: learn a compressed representation (latent features) while enforcing explicit domain rules (e.g., safety margins or monotonicity laws). Unlike unconstrained latent factors, domain-constrained representations are trained to respect physical, ethical, or regulatory constraints. Below, we train an autoencoder that learns three latent features and reconstructs inputs while softly enforcing a monotonic rule: higher values of feat_0 should not decrease the likelihood of the positive label. We add a simple supervised predictor head and penalize violations via a finite-difference monotonicity loss. Implementation uses PyTorch. import torch import torch.nn as nn import torch.optim as optim from sklearn.model_selection import train_test_split # Supervised split using the earlier DataFrame `df` X_train, X_val, y_train, y_val = train_test_split( df.drop(columns=”target”).values, df[‘target’].values, test_size=0.2, random_state=42 ) X_train = torch.tensor(X_train, dtype=torch.float32) y_train = torch.tensor(y_train, dtype=torch.float32).unsqueeze(1) torch.manual_seed(42) class ConstrainedAutoencoder(nn.Module): def __init__(self, input_dim, latent_dim=3): super().__init__() self.encoder = nn.Sequential( nn.Linear(input_dim, 8), nn.ReLU(), nn.Linear(8, latent_dim) ) self.decoder = nn.Sequential( nn.Linear(latent_dim, 8), nn.ReLU(), nn.Linear(8, input_dim) ) # Small predictor head on top of the latent code (logit output) self.predictor = nn.Linear(latent_dim, 1) def forward(self, x): z = self.encoder(x) recon = self.decoder(z) logit = self.predictor(z) return recon, z, logit model = ConstrainedAutoencoder(input_dim=X_train.shape[1]) optimizer = optim.Adam(model.parameters(), lr=1e-3) recon_loss_fn = nn.MSELoss() pred_loss_fn = nn.BCEWithLogitsLoss() epsilon = 1e-2 # finite-difference step for monotonicity on feat_0 for epoch in range(50): model.train() optimizer.zero_grad() recon, z, logit = model(X_train) # Reconstruction + supervised prediction loss loss_recon = recon_loss_fn(recon, X_train) loss_pred = pred_loss_fn(logit, y_train) # Monotonicity penalty: y_logit(x + e*e0) – y_logit(x) should be >= 0 X_plus = X_train.clone() X_plus[:, 0] = X_plus[:, 0] + epsilon _, _, logit_plus = model(X_plus) mono_violation = torch.relu(logit – logit_plus) # negative slope if > 0 loss_mono = mono_violation.mean() loss = loss_recon + 0.5 * loss_pred + 0.1 * loss_mono loss.backward() optimizer.step() # Latent features now reflect the monotonic constraint with torch.no_grad(): _, latent_feats, _ = model(X_train) latent_feats[:5] 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 import torch import torch.nn as nn import torch.optim as optim from sklearn.model_selection import train_test_split # Supervised split using the earlier DataFrame `df` X_train, X_val, y_train, y_val = train_test_split( df.drop(columns=“target”).values, df[‘target’].values, test_size=0.2, random_state=42 ) X_train = torch.tensor(X_train, dtype=torch.float32) y_train = torch.tensor(y_train, dtype=torch.float32).unsqueeze(1) torch.manual_seed(42) class ConstrainedAutoencoder(nn.Module): def __init__(self, input_dim, latent_dim=3):

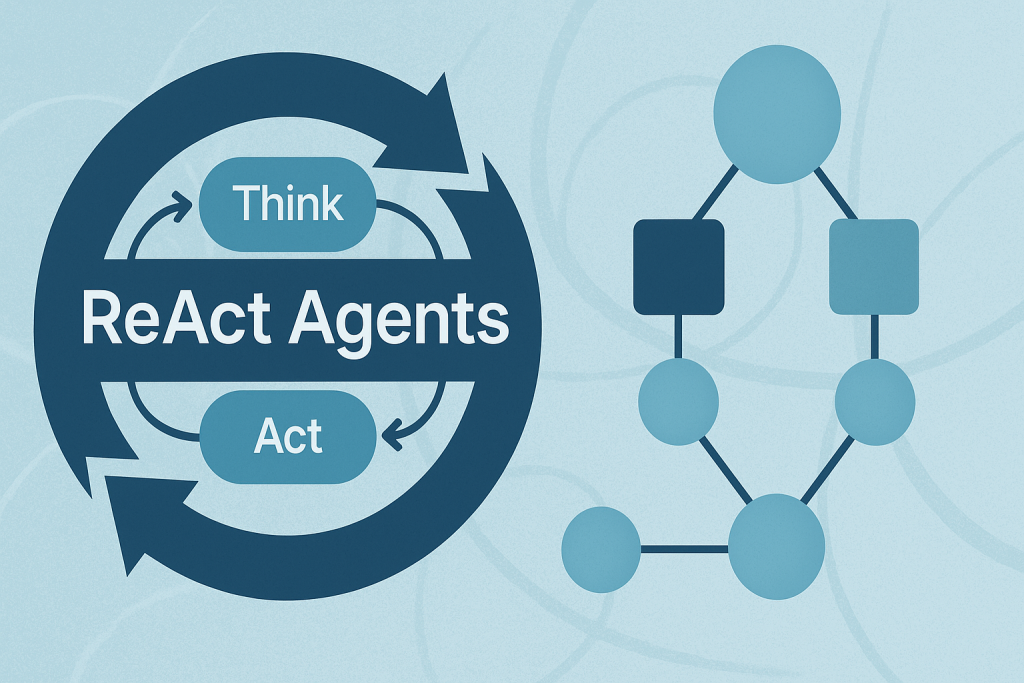

Building ReAct Agents with LangGraph: A Beginner’s Guide

In this article, you will learn how the ReAct (Reasoning + Acting) pattern works and how to implement it with LangGraph — first with a simple, hardcoded loop and then with an LLM-driven agent. Topics we will cover include: The ReAct cycle (Reason → Act → Observe) and why it’s useful for agents. How to model agent workflows as graphs with LangGraph. Building a hardcoded ReAct loop, then upgrading it to an LLM-powered version. Let’s explore these techniques. Building ReAct Agents with LangGraph: A Beginner’s GuideImage by Author What is the ReAct Pattern? ReAct (Reasoning + Acting) is a common pattern for building AI agents that think through problems and take actions to solve them. The pattern follows a simple cycle: Reasoning: The agent thinks about what it needs to do next. Acting: The agent takes an action (like searching for information). Observing: The agent examines the results of its action. This cycle repeats until the agent has gathered enough information to answer the user’s question. Why LangGraph? LangGraph is a framework built on top of LangChain that lets you define agent workflows as graphs. A graph (in this context) is a data structure consisting of nodes (steps in your process) connected by edges (the paths between steps). Each node in the graph represents a step in your agent’s process, and edges define how information flows between steps. This structure allows for complex flows like loops and conditional branching. For example, your agent can cycle between reasoning and action nodes until it gathers enough information. This makes complex agent behavior easy to understand and maintain. Tutorial Structure We’ll build two versions of a ReAct agent: Part 1: A simple hardcoded agent to understand the mechanics. Part 2: An LLM-powered agent that makes dynamic decisions. Part 1: Understanding ReAct with a Simple Example First, we’ll create a basic ReAct agent with hardcoded logic. This helps you understand how the ReAct loop works without the complexity of LLM integration. Setting Up the State Every LangGraph agent needs a state object that flows through the graph nodes. This state serves as shared memory that accumulates information. Nodes read the current state and add their contributions before passing it along. from langgraph.graph import StateGraph, END from typing import TypedDict, Annotated import operator # Define the state that flows through our graph class AgentState(TypedDict): messages: Annotated[list, operator.add] next_action: str iterations: int from langgraph.graph import StateGraph, END from typing import TypedDict, Annotated import operator # Define the state that flows through our graph class AgentState(TypedDict): messages: Annotated[list, operator.add] next_action: str iterations: int Key Components: StateGraph: The main class from LangGraph that defines our agent’s workflow. AgentState: A TypedDict that defines what information our agent tracks. messages: Uses operator.add to accumulate all thoughts, actions, and observations. next_action: Tells the graph which node to execute next. iterations: Counts how many reasoning cycles we’ve completed. Creating a Mock Tool In a real ReAct agent, tools are functions that perform actions in the world — like searching the web, querying databases, or calling APIs. For this example, we’ll use a simple mock search tool. # Simple mock search tool def search_tool(query: str) -> str: # Simulate a search – in real usage, this would call an API responses = { “weather tokyo”: “Tokyo weather: 18°C, partly cloudy”, “population japan”: “Japan population: approximately 125 million”, } return responses.get(query.lower(), f”No results found for: {query}”) # Simple mock search tool def search_tool(query: str) -> str: # Simulate a search – in real usage, this would call an API responses = { “weather tokyo”: “Tokyo weather: 18°C, partly cloudy”, “population japan”: “Japan population: approximately 125 million”, } return responses.get(query.lower(), f“No results found for: {query}”) This function simulates a search engine with hardcoded responses. In production, this would call a real search API like Google, Bing, or a custom knowledge base. The Reasoning Node — The “Brain” of ReAct This is where the agent thinks about what to do next. In this simple version, we’re using hardcoded logic, but you’ll see how this becomes dynamic with an LLM in Part 2. # Reasoning node – decides what to do def reasoning_node(state: AgentState): messages = state[“messages”] iterations = state.get(“iterations”, 0) # Simple logic: first search weather, then population, then finish if iterations == 0: return {“messages”: [“Thought: I need to check Tokyo weather”], “next_action”: “action”, “iterations”: iterations + 1} elif iterations == 1: return {“messages”: [“Thought: Now I need Japan’s population”], “next_action”: “action”, “iterations”: iterations + 1} else: return {“messages”: [“Thought: I have enough info to answer”], “next_action”: “end”, “iterations”: iterations + 1} # Reasoning node – decides what to do def reasoning_node(state: AgentState): messages = state[“messages”] iterations = state.get(“iterations”, 0) # Simple logic: first search weather, then population, then finish if iterations == 0: return {“messages”: [“Thought: I need to check Tokyo weather”], “next_action”: “action”, “iterations”: iterations + 1} elif iterations == 1: return {“messages”: [“Thought: Now I need Japan’s population”], “next_action”: “action”, “iterations”: iterations + 1} else: return {“messages”: [“Thought: I have enough info to answer”], “next_action”: “end”, “iterations”: iterations + 1} How it works: The reasoning node examines the current state and decides: Should we gather more information? (return “action”) Do we have enough to answer? (return “end”) Notice how each return value updates the state: Adds a “Thought” message explaining the decision. Sets next_action to route to the next node. Increments the iteration counter. This mimics how a human would approach a research task: “First I need weather info, then population data, then I can answer.” The Action Node — Taking Action Once the reasoning node decides to act, this node executes the chosen action and observes the results. # Action node – executes the tool def action_node(state: AgentState): iterations = state[“iterations”] # Choose query based on iteration query = “weather tokyo” if iterations == 1 else “population japan” result = search_tool(query) return {“messages”: [f”Action: Searched for ‘{query}’”, f”Observation: {result}”], “next_action”: “reasoning”} # Router – decides next step def route(state: AgentState):

Datasets for Training a Language Model

A language model is a mathematical model that describes a human language as a probability distribution over its vocabulary. To train a deep learning network to model a language, you need to identify the vocabulary and learn its probability distribution. You can’t create the model from nothing. You need a dataset for your model to learn from. In this article, you’ll learn about datasets used to train language models and how to source common datasets from public repositories. Let’s get started. Datasets for Training a Language ModelPhoto by Dan V. Some rights reserved. A Good Dataset for Training a Language Model A good language model should learn correct language usage, free of biases and errors. Unlike programming languages, human languages lack formal grammar and syntax. They evolve continuously, making it impossible to catalog all language variations. Therefore, the model should be trained from a dataset instead of crafted from rules. Setting up a dataset for language modeling is challenging. You need a large, diverse dataset that represents the language’s nuances. At the same time, it must be high quality, presenting correct language usage. Ideally, the dataset should be manually edited and cleaned to remove noise like typos, grammatical errors, and non-language content such as symbols or HTML tags. Creating such a dataset from scratch is costly, but several high-quality datasets are freely available. Common datasets include: Common Crawl. A massive, continuously updated dataset of over 9.5 petabytes with diverse content. It’s used by leading models including GPT-3, Llama, and T5. However, since it’s sourced from the web, it contains low-quality and duplicate content, along with biases and offensive material. Rigorous cleaning and filtering are required to make it useful. C4 (Colossal Clean Crawled Corpus). A 750GB dataset scraped from the web. Unlike Common Crawl, this dataset is pre-cleaned and filtered, making it easier to use. Still, expect potential biases and errors. The T5 model was trained on this dataset. Wikipedia. English content alone is around 19GB. It is massive yet manageable. It’s well-curated, structured, and edited to Wikipedia standards. While it covers a broad range of general knowledge with high factual accuracy, its encyclopedic style and tone are very specific. Training on this dataset alone may cause models to overfit to this style. WikiText. A dataset derived from verified good and featured Wikipedia articles. Two versions exist: WikiText-2 (2 million words from hundreds of articles) and WikiText-103 (100 million words from 28,000 articles). BookCorpus. A few-GB dataset of long-form, content-rich, high-quality book texts. Useful for learning coherent storytelling and long-range dependencies. However, it has known copyright issues and social biases. The Pile. An 825GB curated dataset from multiple sources, including BookCorpus. It mixes different text genres (books, articles, source code, and academic papers), providing broad topical coverage designed for multidisciplinary reasoning. However, this diversity results in variable quality, duplicate content, and inconsistent writing styles. Getting the Datasets You can search for these datasets online and download them as compressed files. However, you’ll need to understand each dataset’s format and write custom code to read them. Alternatively, search for datasets in the Hugging Face repository at https://huggingface.co/datasets. This repository provides a Python library that lets you download and read datasets in real time using a standardized format. Hugging Face Datasets Repository Let’s download the WikiText-2 dataset from Hugging Face, one of the smallest datasets suitable for building a language model: import random from datasets import load_dataset dataset = load_dataset(“wikitext”, “wikitext-2-raw-v1″) print(f”Size of the dataset: {len(dataset)}”) # print a few samples n = 5 while n > 0: idx = random.randint(0, len(dataset)-1) text = dataset[idx][“text”].strip() if text and not text.startswith(“=”): print(f”{idx}: {text}”) n -= 1 import random from datasets import load_dataset dataset = load_dataset(“wikitext”, “wikitext-2-raw-v1”) print(f“Size of the dataset: {len(dataset)}”) # print a few samples n = 5 while n > 0: idx = random.randint(0, len(dataset)–1) text = dataset[idx][“text”].strip() if text and not text.startswith(“=”): print(f“{idx}: {text}”) n -= 1 The output may look like this: Size of the dataset: 36718 31776: The Missouri ‘s headwaters above Three Forks extend much farther upstream than … 29504: Regional variants of the word Allah occur in both pagan and Christian pre @-@ … 19866: Pokiri ( English : Rogue ) is a 2006 Indian Telugu @-@ language action film , … 27397: The first flour mill in Minnesota was built in 1823 at Fort Snelling as a … 10523: The music industry took note of Carey ‘s success . She won two awards at the … Size of the dataset: 36718 31776: The Missouri ‘s headwaters above Three Forks extend much farther upstream than … 29504: Regional variants of the word Allah occur in both pagan and Christian pre @-@ … 19866: Pokiri ( English : Rogue ) is a 2006 Indian Telugu @-@ language action film , … 27397: The first flour mill in Minnesota was built in 1823 at Fort Snelling as a … 10523: The music industry took note of Carey ‘s success . She won two awards at the … If you haven’t already, install the Hugging Face datasets library: When you run this code for the first time, load_dataset() downloads the dataset to your local machine. Ensure you have enough disk space, especially for large datasets. By default, datasets are downloaded to ~/.cache/huggingface/datasets. All Hugging Face datasets follow a standard format. The dataset object is an iterable, with each item as a dictionary. For language model training, datasets typically contain text strings. In this dataset, text is stored under the “text” key. The code above samples a few elements from the dataset. You’ll see plain text strings of varying lengths. Post-Processing the Datasets Before training a language model, you may want to post-process the dataset to clean the data. This includes reformatting text (clipping long strings, replacing multiple spaces with single spaces), removing non-language content (HTML tags, symbols), and removing unwanted characters (extra spaces around punctuation). The specific processing depends on the dataset and how you want to present text to the model. For example, if

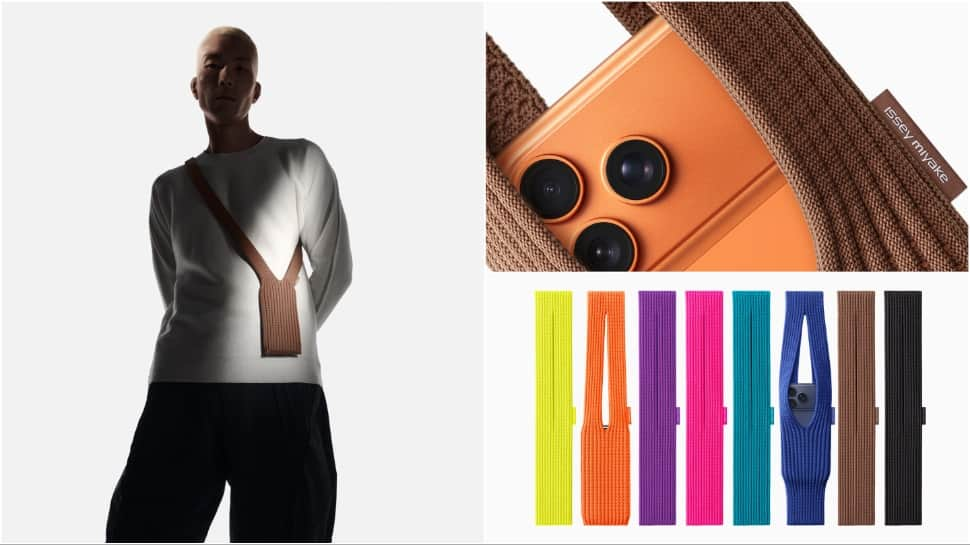

VIRAL: Apple’s Limited-Edition ‘iPhone Pocket’ Accessory Brutally Mocked Over Rs 20,400 Price Tag | viral News

Apple recently announced the “iPhone Pocket,” a limited-edition accessory developed in cooperation with ISSEY MIYAKE, which quickly set off a storm of social media ridicule regarding its high price and unusual design. The accessory, described by the company as a “singular 3D-knitted construction,” comes in two variants priced between around Rs 13,310 and Rs 20,400. The pricey accessory has been compared to nothing but a plain, cut-up sock by many social media users, or at best, a fabric crossbody bag. Social Media Backlash/Memes Add Zee News as a Preferred Source The prices, varying from Rs 13,310 for a short strap to Rs 20,400 for a long strap, were the source of much ridicule online. One user exclaimed, “The iPhone pocket everybody. Rs 20,400 for a cut-up sock. Apple people will pay anything for anything as long as it’s Apple.” Another user shared a picture and wrote, “This sock or ‘iPhone Pocket’ is for Rs 20,400,” while another user commented wryly on the price: “iPhone Pocket: Rs 13,310. Your gym sock: Rs 12.” TWO hundred and thirty dollars This feels like a litmus test for people who will buy/defend anything Apple releases pic.twitter.com/hSAaJXGAOn — Marques Brownlee (@MKBHD) November 11, 2025 The reaction was everywhere, with many memes popping up; many featured pictures of the iPhone Pocket photoshopped to include googly eyes. Design And Pricing Details The iPhone Pocket, in collaboration with ISSEY MIYAKE, takes its inspiration from “the concept of a piece of cloth” and is crafted in Japan. Construction: The accessory is described as a “singular 3D-knitted construction designed to fit any iPhone.” Versions and Pricing: Short Strap: Costs Rs 13,310 (approx.), comes in eight colours including lemon, mandarin, purple, and black. Long Strap: Rs 20,400, it is available in three colours: sapphire, cinnamon, and black. Design Philosophy: Yoshiyuki Miyamae, design director of MIYAKE DESIGN STUDIO, described the concept: “iPhone Pocket explores the concept of “the joy of wearing iPhone in your own way.” The simplicity of its design echoes what we practice at ISSEY MIYAKE — the idea of leaving things less defined to allow for possibilities and personal interpretation.” Availability The special-edition iPhone Pocket will be available to purchase from Friday, November 14, in select Apple Store locations in the USA, and globally on apple.com in France, Greater China, Italy, Japan, Singapore, South Korea, the UK, and the US. ALSO READ | WATCH: China’s Newly Opened Hongqi Bridge Collapses In Massive Landslide; Viral Video

Google Nano Banana 2 AI Image Model Launch Soon: Here’s What To Expect In Terms Of Features, Quality Upgrades, And Release Details | Technology News

Google Nano Banana 2 AI Image: The first Nano Banana AI image model became a massive success, allowing millions of users to generate creative and realistic visuals using advanced AI technology. Now, anticipation is building as Nano Banana 2 promises to take image generation to the next level with improved accuracy, quality, and versatility. Some reports even suggest Google might announce the model as early as this week. Nano Banana 2 Launch: What to Expect According to early leaks and insider reports, Nano Banana 2 will go beyond traditional image generation. The upcoming model is expected to allow users to create charts, infographics, and visual data using simple text prompts. Another exciting addition could be support for high-resolution downloads and customizable aspect ratios like 9:16 and 16:9 — perfect for both portrait and landscape visuals. Add Zee News as a Preferred Source Additionally, Nano Banana 2 might introduce enhanced capabilities for generating realistic images of public figures such as world leaders or athletes, placing them in different creative settings using contextual prompts. What Else to Expect from Google Alongside Nano Banana 2, Google is also preparing to roll out Gemini 3.0, the next major update in its AI ecosystem. The tech giant has partnered with Jio to offer the Gemini AI Pro plan free of cost for 18 months to users in India — a move positioned to rival ChatGPT Go and Perplexity Pro’s offerings. Gemini’s integration is expanding further into Google Maps, where users can now ask contextual, AI-powered questions — such as locating the nearest gas station or finding restaurants matching specific cuisines and preferences. The update also aims to connect Gemini with other apps, making it more useful for daily tasks, including navigation and on-the-go assistance. With Nano Banana 2 and Gemini 3.0, Google is clearly doubling down on its AI advancements, aiming to redefine how users create, explore, and interact with digital content.

7 Prompt Engineering Tricks to Mitigate Hallucinations in LLMs

7 Prompt Engineering Tricks to Mitigate Hallucinations in LLMs Introduction Large language models (LLMs) exhibit outstanding abilities to reason over, summarize, and creatively generate text. Still, they remain susceptible to the common problem of hallucinations, which consists of generating confident-looking but false, unverifiable, or sometimes even nonsensical information. LLMs generate text based on intricate statistical and probabilistic patterns rather than relying primarily on verifying grounded truths. In some critical fields, this issue can cause major negative impacts. Robust prompt engineering, which involves the craftsmanship of elaborating well-structured prompts with instructions, constraints, and context, can be an effective strategy to mitigate hallucinations. The seven techniques listed in this article, with examples of prompt templates, illustrate how both standalone LLMs and retrieval augmented generation (RAG) systems can improve their performance and become more robust against hallucinations by simply implementing them in your user queries. 1. Encourage Abstention and “I Don’t Know” Responses LLMs typically focus on providing answers that sound confident even when they are uncertain — check this article to comprehend in detail how LLMs generate text — generating sometimes fabricated facts as a result. Explicitly allowing abstention can guide the LLM toward mitigating a sense of false confidence. Let’s look at an example prompt to do this: “You are a fact-checking assistant. If you are not confident in an answer, respond: ‘I don’t have enough information to answer that.’ If confident, give your answer with a short justification.” The above prompt would be followed by an actual question or fact check. A sample expected response would be: “I don’t have enough information to answer that.” or “Based on the available evidence, the answer is … (reasoning).” This is a good first line of defense, but nothing is stopping an LLM from disregarding those directions with some regularity. Let’s see what else we can do. 2. Structured, Chain-of-Thought Reasoning Asking a language model to apply step-by-step reasoning incentivizes inner consistency and mitigates logic gaps that could sometimes cause model hallucinations. The Chain-of-Thought Reasoning (CoT) strategy basically consists of emulating an algorithm — like list of steps or stages that the model should sequentially tackle to address the overall task at hand. Once more, the example template below is assumed to be accompanied by a problem-specific prompt of your own. “Please think through this problem step by step:1) What information is given?2) What assumptions are needed?3) What conclusion follows logically?” A sample expected response: “1) Known facts: A, B. 2) Assumptions: C. 3) Therefore, conclusion: D.” 3. Grounding with “According To” This prompt engineering trick is conceived to link the answer sought to named sources. The effect is to discourage invention-based hallucinations and stimulate fact-based reasoning. This strategy can be naturally combined with number 1 discussed earlier. “According to the World Health Organization (WHO) report from 2023, explain the main drivers of antimicrobial resistance. If the report doesn’t provide enough detail, say ‘I don’t know.’” A sample expected response: “According to the WHO (2023), the main drivers include overuse of antibiotics, poor sanitation, and unregulated drug sales. Further details are unavailable.” 4. RAG with Explicit Instruction and Context RAG grants the model access to a knowledge base or document base containing verified or current text data. Even so, the risk of hallucinations persists in RAG systems unless a well-crafted prompt instructs the system to exclusively rely on retrieved text. *[Assume two retrieved documents: X and Y]*“Using only the information in X and Y, summarize the main causes of deforestation in the Amazon basin and related infrastructure projects. If the documents don’t cover a point, say ‘insufficient data.’” A sample expected response: “According to Doc X and Doc Y, key causes include agricultural expansion and illegal logging. For infrastructure projects, insufficient data.” 5. Output Constraints and Limiting Scope Tightly controlling the format of generated outputs and their length contributes to reducing hallucinations in the form of speculative or tangential statements, like unsupported claims for causes, over-elaborated chains of reasoning, or made-up statistics, thereby preventing results that may drift away from source materials. Constraining the “degrees of freedom” over the answer space increases the odds of returning verifiable information rather than filling the gaps “no matter what.” “In no more than 100 words, summarize the role of mitochondria in human cells. If unsure, respond ‘I don’t know.’” A sample expected response: “Mitochondria generate ATP via oxidative phosphorylation, regulate calcium levels, and influence apoptosis. I lack sufficient data on additional roles.” 6. RAG-based Correction Loops: Chain-of-Verification This approach consists of combining retrieval with self-checking, enabling the model’s ability to question and double-check its own claims against external sources of evidence, primarily in RAG systems. It mainly targets hallucinations due to “over-confidence,” trying to mitigate them. “Step 1: Generate an initial answer to the question: ‘When did the Berlin Wall fall?’Step 2: Retrieve and read relevant passages from a trusted historical database.Step 3: Compare the retrieved evidence to your answer.Step 4: If discrepancies exist, correct the answer and cite the retrieved source.” A sample expected response: “Initial answer: 1989.Retrieved evidence: Historical archives confirm the Berlin Wall opened on 9 November 1989.Final verified answer: The Berlin Wall fell on 9 November 1989 when East Berlin opened border crossings.” 7. Domain-Specific Prompts, Disclaimers, and Safety Guardrails In high-stakes application domains like medicine, it is essential to specify constrained domain boundaries and require citations to sources, to reduce the risk of speculative claims that could in practice lead to negative consequences. Here is an example of doing so: “You are a certified medical information assistant. Using peer-reviewed studies or official guidelines published before 2024, explain the first-line treatment for moderate persistent asthma in adults. If you cannot cite such a guideline, respond: ‘I cannot provide a recommendation; consult a medical professional.’” A sample expected response: “According to the Global Initiative for Asthma (GINA) 2023 guideline, first-line therapy for moderate persistent asthma is a low-dose inhaled corticosteroid with a long-acting β₂-agonist such as budesonide/formoterol. For patient-specific adjustments, consult a clinician.” Wrapping Up Below is a summary the 7 strategies

10 Python One-Liners for Calculating Model Feature Importance

10 Python One-Liners for Calculating Model Feature ImportanceImage by Editor Understanding machine learning models is a vital aspect of building trustworthy AI systems. The understandability of such models rests on two basic properties: explainability and interpretability. The former refers to how well we can describe a model’s “innards” (i.e. how it operates and looks internally), while the latter concerns how easily humans can understand the captured relationships between input features and predicted outputs. As we can see, the difference between them is subtle, but there is a powerful bridge connecting both: feature importance. This article unveils 10 simple but effective Python one-liners to calculate model feature importance from different perspectives — helping you understand not only how your machine learning model behaves, but also why it made the prediction(s) it did. 1. Built-in Feature Importance in Decision Tree-based Models Tree-based models like random forests and XGBoost ensembles allow you to easily obtain a list of feature-importance weights using an attribute like: importances = model.feature_importances_ importances = model.feature_importances_ Note that model should contain a trained model a priori. The result is an array containing the importance of features, but if you want a more self-explanatory version, this code enhances the previous one-liner by incorporating the feature names for a dataset like iris, all in one line. print(“Feature importances:”, list(zip(iris.feature_names, model.feature_importances_))) print(“Feature importances:”, list(zip(iris.feature_names, model.feature_importances_))) 2. Coefficients in Linear Models Simpler linear models like linear regression and logistic regression also expose feature weights via learned coefficients. This is a way to obtain the first of them directly and neatly (remove the positional index to obtain all weights): importances = abs(model.coef_[0]) importances = abs(model.coef_[0]) 3. Sorting Features by Importance Similar to the enhanced version of number 1 above, this useful one-liner can be used to rank features by their importance values in descending order: an excellent glimpse of which features are the strongest or most influential contributors to model predictions. sorted_features = sorted(zip(features, importances), key=lambda x: x[1], reverse=True) sorted_features = sorted(zip(features, importances), key=lambda x: x[1], reverse=True) 4. Model-Agnostic Permutation Importance Permutation importance is an additional approach to measure a feature’s importance — namely, by shuffling its values and analyzing how a metric used to measure the model’s performance (e.g. accuracy or error) decreases. Accordingly, this model-agnostic one-liner from scikit-learn is used to measure performance drops as a result of randomly shuffling a feature’s values. from sklearn.inspection import permutation_importance result = permutation_importance(model, X, y).importances_mean from sklearn.inspection import permutation_importance result = permutation_importance(model, X, y).importances_mean 5. Mean Loss of Accuracy in Cross-Validation Permutations This is an efficient one-liner to test permutations in the context of cross-validation processes — analyzing how shuffling each feature impacts model performance across K folds. import numpy as np from sklearn.model_selection import cross_val_score importances = [(cross_val_score(model, X.assign(**{f: np.random.permutation(X[f])}), y).mean()) for f in X.columns] import numpy as np from sklearn.model_selection import cross_val_score importances = [(cross_val_score(model, X.assign(**{f: np.random.permutation(X[f])}), y).mean()) for f in X.columns] 6. Permutation Importance Visualizations with Eli5 Eli5 — an abbreviated form of “Explain like I’m 5 (years old)” — is, in the context of Python machine learning, a library for crystal-clear explainability. It provides a mildly visually interactive HTML view of feature importances, making it particularly handy for notebooks and suitable for trained linear or tree models alike. import eli5 eli5.show_weights(model, feature_names=features) import eli5 eli5.show_weights(model, feature_names=features) 7. Global SHAP Feature Importance SHAP is a popular and powerful library to get deeper into explaining model feature importance. It can be used to calculate mean absolute SHAP values (feature-importance indicators in SHAP) for each feature — all under a model-agnostic, theoretically grounded measurement approach. import numpy as np import shap shap_values = shap.TreeExplainer(model).shap_values(X) importances = np.abs(shap_values).mean(0) import numpy as np import shap shap_values = shap.TreeExplainer(model).shap_values(X) importances = np.abs(shap_values).mean(0) 8. Summary Plot of SHAP Values Unlike global SHAP feature importances, the summary plot provides not only the global importance of features in a model, but also their directions, visually helping understand how feature values push predictions upward or downward. shap.summary_plot(shap_values, X) shap.summary_plot(shap_values, X) Let’s look at a visual example of result obtained: 9. Single-Prediction Explanations with SHAP One particularly attractive aspect of SHAP is that it helps explain not only the overall model behavior and feature importances, but also how features specifically influence a single prediction. In other words, we can reveal or decompose an individual prediction, explaining how and why the model yielded that specific output. shap.force_plot(shap.TreeExplainer(model).expected_value, shap_values[0], X.iloc[0]) shap.force_plot(shap.TreeExplainer(model).expected_value, shap_values[0], X.iloc[0]) 10. Model-Agnostic Feature Importance with LIME LIME is an alternative library to SHAP that generates local surrogate explanations. Rather than using one or the other, these two libraries complement each other well, helping better approximate feature importance around individual predictions. This example does so for a previously trained logistic regression model. from lime.lime_tabular import LimeTabularExplainer exp = LimeTabularExplainer(X.values, feature_names=features).explain_instance(X.iloc[0], model.predict_proba) from lime.lime_tabular import LimeTabularExplainer exp = LimeTabularExplainer(X.values, feature_names=features).explain_instance(X.iloc[0], model.predict_proba) Wrapping Up This article unveiled 10 effective Python one-liners to help better understand, explain, and interpret machine learning models with a focus on feature importance. Comprehending how your model works from the inside is no longer a mysterious black box with the aid of these tools.