In this article, you will learn how reranking improves the relevance of results in retrieval-augmented generation (RAG) systems by going beyond what retrievers alone can achieve.

Topics we will cover include:

- How rerankers refine retriever outputs to deliver better answers

- Five top reranker models to test in 2026

- Final thoughts on choosing the right reranker for your system

Let’s get started.

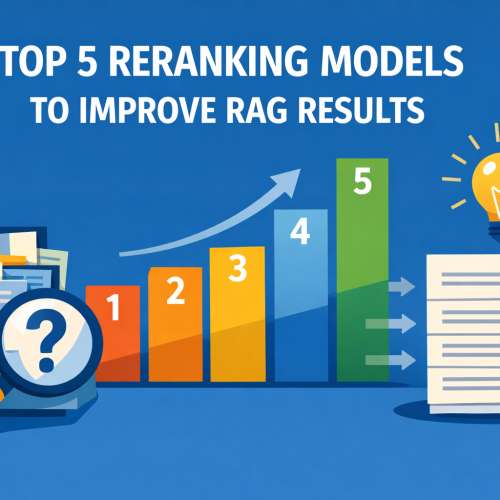

Top 5 Reranking Models to Improve RAG Results

Image by Editor

Introduction

If you have worked with retrieval-augmented generation (RAG) systems, you have probably seen this problem. Your retriever brings back “relevant” chunks, but many of them are not actually useful. The final answer ends up noisy, incomplete, or incorrect. This usually happens because the retriever is optimized for speed and recall, not precision.

That is where reranking comes in.

Reranking is the second stage of retrieval in a RAG pipeline. First, your retriever fetches a set of candidate chunks. Then, a reranker evaluates the query against each candidate and reorders the candidates by deeper relevance, so only the strongest ones reach the prompt.

In simple terms:

- Retriever → gets possible matches

- Reranker → picks the best matches
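The two stages above can be sketched in a few lines. This is a minimal illustration and not a production setup: `retrieve`, `rerank`, and `toy_score` are hypothetical names, the retriever is a plain token-overlap count, and `toy_score` is only a placeholder for the call you would make to a real reranker model.

```python
from typing import Callable, List, Tuple


def tokens(text: str) -> set:
    """Lowercased whitespace tokens; a stand-in for real text processing."""
    return set(text.lower().split())


def retrieve(query: str, corpus: List[str], k: int) -> List[str]:
    """Stage 1: cheap and recall-oriented. Ranks by raw count of shared tokens."""
    q = tokens(query)
    ranked = sorted(corpus, key=lambda doc: len(q & tokens(doc)), reverse=True)
    return ranked[:k]


def toy_score(query: str, doc: str) -> float:
    """Hypothetical relevance score: share of the document's tokens found in the query.
    In a real system, this is where a reranker model would be called."""
    d = tokens(doc)
    return len(tokens(query) & d) / max(1, len(d))


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float],
    top_n: int,
) -> List[Tuple[str, float]]:
    """Stage 2: score every (query, candidate) pair and keep the best top_n."""
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


corpus = [
    "how does a retriever fetch chunks from a large index of many unrelated documents",
    "a reranker reorders retrieved chunks",
    "bananas are rich in potassium",
]
query = "how does a reranker reorder chunks"

candidates = retrieve(query, corpus, k=2)               # wide net
best = rerank(query, candidates, toy_score, top_n=1)    # narrow to the best match
print(best[0][0])
```

In this toy run, the retriever ranks the long first document highest because it shares the most words with the query, while the second stage promotes the shorter, more focused chunk. Swapping `toy_score` for a real model leaves the rest of the pipeline unchanged.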

This small step often makes a big difference. You get fewer irrelevant chunks in your prompt, which leads to better answers from your LLM. Benchmarks like MTEB, BEIR, and MIRACL are commonly used to evaluate these models, and many production RAG systems include a reranking stage.

There is no single best reranker for every use case. The right choice depends on your data, latency budget, cost constraints, and context-length requirements. If you are starting fresh in 2026, these are the five models to test first.

1. Qwen3-Reranker-4B

If I had to pick one open reranker to test first, it would be Qwen3-Reranker-4B. The model is open-sourced under Apache 2.0, supports 100+ languages, and has a 32k context length. It shows very strong published reranking results (69.76 on MTEB-R, 75.94 on CMTEB-R, 72.74 on MMTEB-R, 69.97 on MLDR, and 81.20 on MTEB-Code). It performs well across different types of data, including multiple languages, long documents, and code.

2. NVIDIA nv-rerankqa-mistral-4b-v3

For question-answering RAG over text passages, nv-rerankqa-mistral-4b-v3 is a solid, benchmark-backed choice. It delivers high ranking accuracy across evaluated datasets, with an average Recall@5 of 75.45% when paired with NV-EmbedQA-E5-v5 across NQ, HotpotQA, FiQA, and TechQA. It is commercially ready. The main limitation is context size (512 tokens per pair), so it works best with clean chunking.
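To check a figure like Recall@5 on your own data, you can compute the metric directly. The sketch below uses the common definition (the fraction of a query's relevant documents that appear in the top k results); the function names and document IDs are hypothetical, and individual benchmarks may define or average the metric slightly differently.

```python
from typing import Dict, List, Set


def recall_at_k(ranked_ids: List[str], relevant_ids: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k ranked results."""
    if not relevant_ids:
        return 0.0
    hits = set(ranked_ids[:k]) & relevant_ids
    return len(hits) / len(relevant_ids)


def mean_recall_at_k(
    rankings: Dict[str, List[str]],
    relevant: Dict[str, Set[str]],
    k: int,
) -> float:
    """Average recall@k over all queries that have a ranking."""
    if not rankings:
        return 0.0
    total = sum(recall_at_k(ranked, relevant.get(qid, set()), k)
                for qid, ranked in rankings.items())
    return total / len(rankings)


# Illustrative IDs only, not benchmark data.
rankings = {
    "q1": ["d3", "d1", "d7", "d2", "d9", "d4"],
    "q2": ["d5", "d8", "d6"],
}
relevant = {
    "q1": {"d1", "d4"},   # d4 sits at rank 6, so it is missed at k=5
    "q2": {"d5"},
}
print(mean_recall_at_k(rankings, relevant, k=5))
```

Running the same evaluation with and without the reranker in the loop shows how much of the gain comes from the second stage.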

3. Cohere rerank-v4.0-pro

For a managed, enterprise-friendly option, rerank-v4.0-pro is designed as a quality-focused reranker with 32k context, multilingual support across 100+ languages, and support for semi-structured JSON documents. It is suitable for production data such as tickets, CRM records, tables, or metadata-rich objects.
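If your pipeline passes documents to the reranker as plain strings, one way to prepare records like these is to serialize the fields you care about into labeled lines. This is a sketch under that assumption, not a description of the Cohere API: `record_to_text` and the ticket fields are hypothetical.

```python
import json
from typing import Any, Dict, List


def record_to_text(record: Dict[str, Any], fields: List[str]) -> str:
    """Serialize selected fields of a record as 'field: value' lines, in the given order.
    Empty and missing fields are skipped so they do not add noise."""
    lines = []
    for field in fields:
        value = record.get(field)
        if value is None or value == "":
            continue
        if isinstance(value, (dict, list)):
            # Nested values are kept as compact JSON so their structure stays visible.
            value = json.dumps(value, ensure_ascii=False, sort_keys=True)
        lines.append(f"{field}: {value}")
    return "\n".join(lines)


# Hypothetical support ticket.
ticket = {
    "id": 42,
    "title": "Login fails",
    "tags": ["auth", "sso"],
    "body": "",
    "priority": None,
}
print(record_to_text(ticket, ["title", "tags", "body", "priority"]))
```

Putting the most informative fields first is a reasonable default, since long records may be truncated to fit the model's context window.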

4. jina-reranker-v3

Most rerankers score each document independently. jina-reranker-v3 instead uses listwise reranking: it processes up to 64 documents together in a 131k-token context window, so each candidate is scored with the others in view. It reports 61.94 nDCG@10 on BEIR. This approach is useful for long-context RAG, multilingual search, and retrieval tasks where relative ordering matters. It is published under CC BY-NC 4.0, which restricts the released weights to non-commercial use.
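Because nDCG@10 rewards putting the most relevant documents at the top, it is a natural metric when relative ordering matters. Here is a minimal sketch of the calculation, assuming the linear-gain form (relevance divided by a log2 position discount); some implementations use an exponential gain instead, so numbers are only comparable when the same variant is used. The function names are hypothetical.

```python
import math
from typing import List


def dcg_at_k(relevances: List[float], k: int) -> float:
    """Discounted cumulative gain: each relevance is divided by log2(rank + 1)."""
    return sum(rel / math.log2(i + 2) for i, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked_relevances: List[float], all_relevances: List[float], k: int) -> float:
    """DCG of the produced ranking divided by the DCG of the ideal ranking.

    ranked_relevances: relevance labels in the order the system returned the documents.
    all_relevances: labels of every judged document for the query, in any order.
    """
    ideal = dcg_at_k(sorted(all_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal if ideal > 0 else 0.0


# Toy labels: two relevant documents, returned at ranks 1 and 3.
score = ndcg_at_k([1, 0, 1], [1, 1, 0], k=10)
print(round(score, 4))
```

A ranking that places both relevant documents first would score 1.0; pushing one of them down to rank 3 lowers the score, even though the same documents were returned.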

5. BAAI bge-reranker-v2-m3

Not every strong reranker needs to be new. bge-reranker-v2-m3 is lightweight, multilingual, easy to deploy, and fast at inference. It is a practical baseline. If a newer model does not significantly outperform BGE, the added cost or latency may not be justified.
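The baseline comparison can be written down as an explicit rule. The sketch below is one hypothetical way to do it: `RerankerResult`, `worth_switching`, and both thresholds are assumptions to tune for your own system, and the numbers are placeholders, not measurements of any model.

```python
from dataclasses import dataclass


@dataclass
class RerankerResult:
    name: str
    ndcg_at_10: float       # measured on your own evaluation set
    p95_latency_ms: float   # measured under your own load


def worth_switching(
    baseline: RerankerResult,
    candidate: RerankerResult,
    min_gain: float = 0.02,           # assumed minimum quality gain worth paying for
    max_latency_ratio: float = 2.0,   # assumed tolerable slowdown versus the baseline
) -> bool:
    """True if the candidate is clearly better and not too much slower than the baseline."""
    if baseline.p95_latency_ms <= 0:
        raise ValueError("baseline latency must be positive")
    gain = candidate.ndcg_at_10 - baseline.ndcg_at_10
    latency_ratio = candidate.p95_latency_ms / baseline.p95_latency_ms
    return gain >= min_gain and latency_ratio <= max_latency_ratio


# Placeholder numbers for illustration only.
baseline = RerankerResult("baseline", ndcg_at_10=0.50, p95_latency_ms=40.0)
candidate = RerankerResult("candidate", ndcg_at_10=0.56, p95_latency_ms=60.0)
print(worth_switching(baseline, candidate))
```

Keeping the rule in code makes the trade-off explicit and easy to rerun whenever a new model appears.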

Final Thoughts

Reranking is a simple yet powerful way to improve a RAG system. A good retriever gets you close. A good reranker gets you to the right answer. In 2026, adding a reranker is essential. Here is a shortlist of recommendations:

| Use case | Recommended model |
|---|---|
| Best open model | Qwen3-Reranker-4B |
| Best for QA pipelines | NVIDIA nv-rerankqa-mistral-4b-v3 |
| Best managed option | Cohere rerank-v4.0-pro |
| Best for long context | jina-reranker-v3 |
| Best baseline | BAAI bge-reranker-v2-m3 |

This selection provides a strong starting point. Your specific use case and system constraints should guide the final choice.

About Kanwal Mehreen

Kanwal Mehreen is an aspiring Software Developer with a keen interest in data science and applications of AI in medicine. Kanwal was selected as the Google Generation Scholar 2022 for the APAC region. Kanwal loves to share technical knowledge by writing articles on trending topics, and is passionate about improving the representation of women in the tech industry.