In this article, you will learn how machine learning is evolving in 2026 from prediction-focused systems into deeply integrated, action-oriented systems that drive real-world workflows.

Topics we will cover include:

- Why agentic AI and generative AI are reshaping how machine learning systems are designed and deployed.

- How specialized models, edge deployment, and operational maturity are changing what effective machine learning looks like in practice.

- Why human collaboration, explainability, and responsible design are becoming essential as machine learning moves deeper into decision-making.

Let’s not waste any more time.

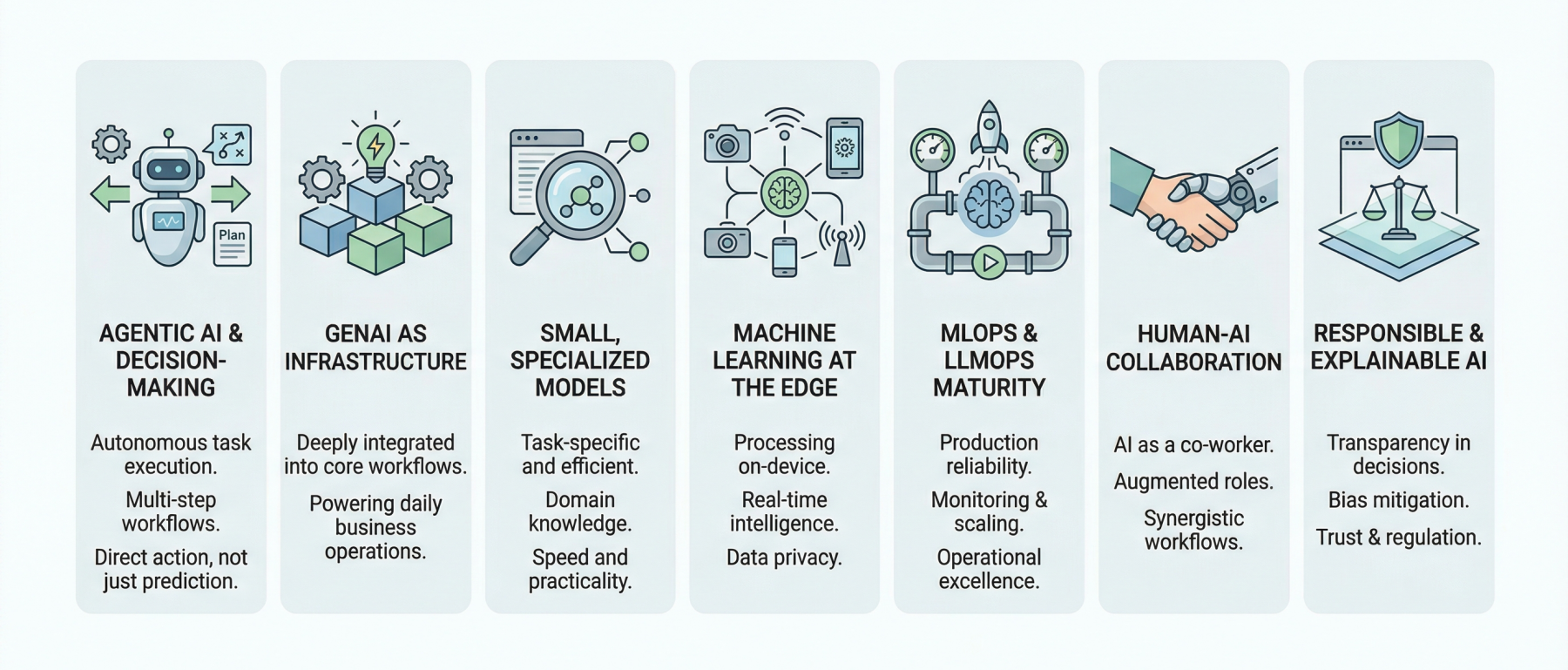

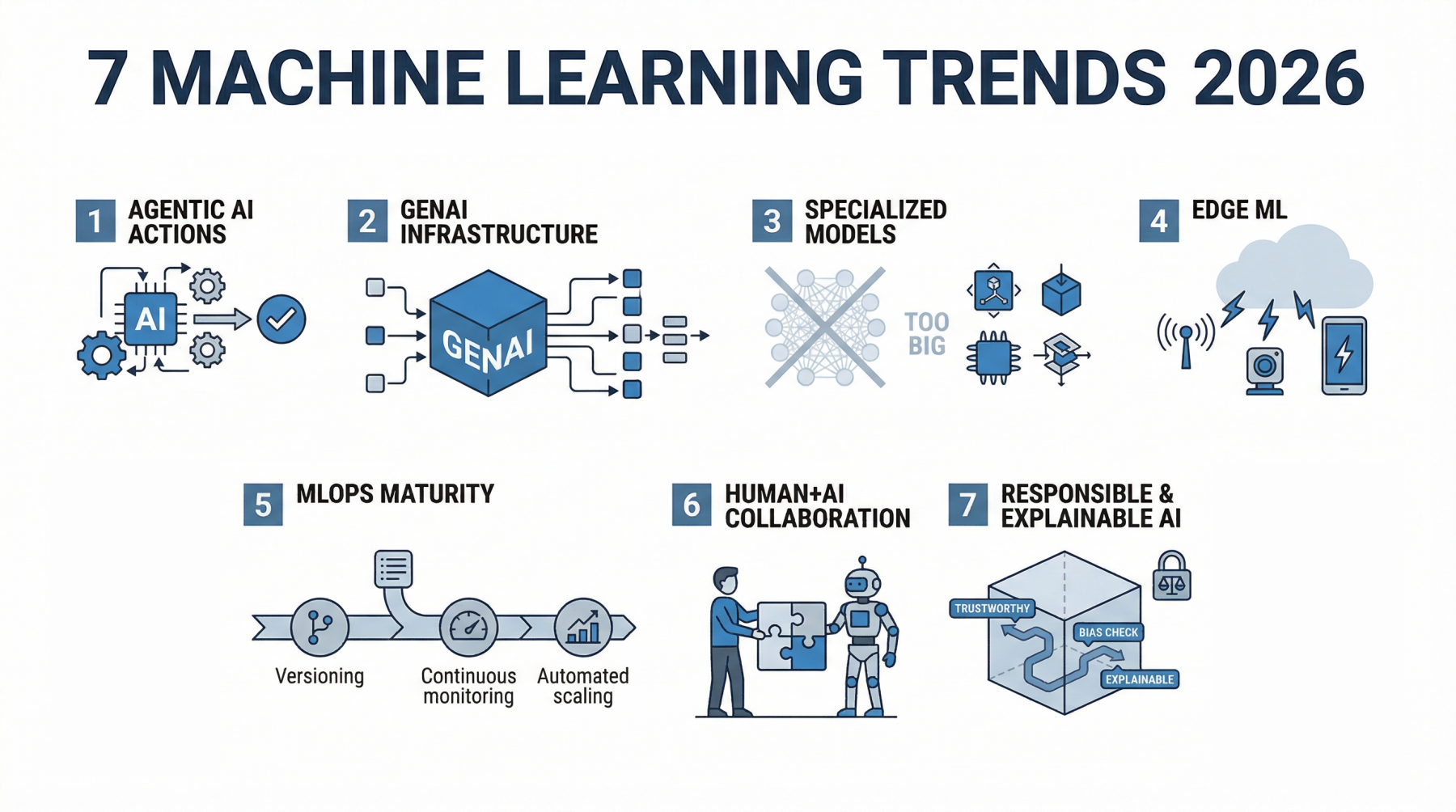

7 Machine Learning Trends to Watch in 2026

Image by Editor

The Shifting Trend Landscape

A couple of years ago, most machine learning systems sat quietly behind dashboards. You gave them data, they returned predictions, and a human still had to decide what to do next. That boundary is fading. In 2026, machine learning is no longer just something you query. It is something that acts, often without waiting for permission.

The shift did not happen overnight. In 2023 and 2024, the focus was on capability: bigger models, better benchmarks, and more impressive demos. Teams rushed to plug AI into products just to prove they could. What followed was a reality check. Many of those early implementations struggled in production. They were expensive, hard to maintain, and often disconnected from real workflows.

Now the focus has changed. Machine learning is being designed around outcomes, not just outputs. Systems are expected to complete tasks, not just assist with them. A customer support model does not just suggest replies; it resolves tickets. A data pipeline does not just flag anomalies; it triggers actions. The difference is subtle, but it changes how everything is built.

This shift is also reflected in how much money is moving into the space. Global AI spending is projected to reach $2.02 trillion by 2026. At the same time, the machine learning market is expected to grow toward $1.88 trillion by 2035. These are not speculative investments anymore. They reflect systems that are already being embedded into core business operations.

What stands out in 2026 is not just how powerful these models are, but how deeply they are integrated. Machine learning is no longer sitting on the side as an experimental feature. It is part of the workflow itself, shaping decisions, automating processes, and, in many cases, running them end to end.

Here are the seven trends shaping how machine learning is being built and used in 2026.

Trend 1: Agentic AI Moves From Assistants to Decision-Makers

For a long time, machine learning systems behaved like quiet assistants. You gave them input, they returned an output, and the responsibility of acting on that output stayed with a human or another system. That model is breaking down.

Agentic AI changes the role entirely. Instead of waiting for instructions, these systems can plan, make decisions, and carry out tasks from start to finish.

The difference becomes clear when you compare it to traditional machine learning. A typical model might predict customer churn or classify support tickets. Useful, but limited. An agentic system takes it further. It identifies a high-risk customer, decides on the best retention strategy, drafts a personalized message, and triggers the outreach. The output is no longer just a prediction. It is an action.

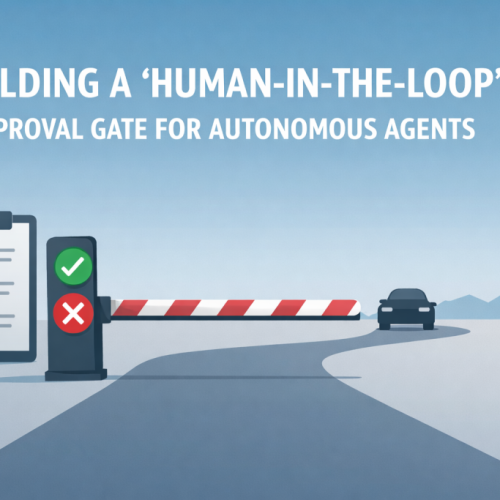

What makes this possible is the ability to handle multi-step workflows. Agentic systems can break down a goal into smaller tasks, execute them in sequence, and adjust along the way. They can pull data from different sources, call APIs, generate responses, and refine decisions based on feedback. This is closer to how a human approaches a problem than how a traditional model operates.
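The multi-step behavior described above can be sketched as a small predict, decide, draft, act loop. This is a minimal illustration under stated assumptions, not a real agent framework: `score_churn_risk`, `choose_strategy`, `draft_message`, and `send_outreach` are hypothetical stand-ins for a churn model, a decision policy, a generative model, and an outreach API, and the thresholds are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Customer:
    name: str
    days_since_login: int
    open_tickets: int


@dataclass
class AgentRun:
    """Records every step so a human can audit what the agent did."""
    steps: list = field(default_factory=list)

    def log(self, step: str, result) -> None:
        self.steps.append((step, result))


def score_churn_risk(c: Customer) -> float:
    # Hypothetical stand-in for a trained churn model.
    return min(1.0, c.days_since_login / 60 + 0.1 * c.open_tickets)


def choose_strategy(risk: float) -> str:
    # Hypothetical decision policy; thresholds are illustrative only.
    if risk >= 0.7:
        return "discount"
    if risk >= 0.4:
        return "check_in"
    return "none"


def draft_message(c: Customer, strategy: str) -> str:
    # Hypothetical stand-in for a generative model call.
    templates = {
        "discount": "Hi {name}, here is 20% off your next month.",
        "check_in": "Hi {name}, is there anything we can help with?",
    }
    return templates[strategy].format(name=c.name)


def send_outreach(message: str, outbox: list) -> None:
    # Hypothetical stand-in for an email or CRM API call.
    outbox.append(message)


def run_retention_agent(c: Customer, outbox: list) -> AgentRun:
    """Chain prediction, decision, drafting, and action into one run."""
    run = AgentRun()
    risk = score_churn_risk(c)
    run.log("predict", risk)
    strategy = choose_strategy(risk)
    run.log("decide", strategy)
    if strategy == "none":
        return run  # low risk: the agent stops without acting
    message = draft_message(c, strategy)
    run.log("draft", message)
    send_outreach(message, outbox)
    run.log("act", "sent")
    return run
```

The traditional model ends at `score_churn_risk`. Everything after that line is what makes the system agentic, and the step log is there because an agent that acts on its own still needs to be auditable.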

You can already see this shift across industries. In customer support, AI agents are resolving entire tickets without escalation. In operations, they are managing inventory decisions by combining demand forecasts with supply constraints. In healthcare, they assist with tasks like summarizing patient records and recommending next steps, reducing the time clinicians spend on routine work.

The numbers reflect how quickly this is moving. The AI agents market is expected to reach $93.2 billion by 2032. At the same time, reports suggest that up to 40% of enterprise applications may include AI agents by 2026. That level of adoption points to something more than a trend. It signals a shift in how software itself is designed.

This is arguably the most important change in machine learning right now. Once systems can act on their own, everything else starts to evolve around that capability. Model design, infrastructure, and even user interfaces begin to revolve around autonomy rather than assistance.

Trend 2: Generative AI Becomes Infrastructure, Not a Feature

There was a time when adding generative AI to a product felt like a headline. A chatbot here, a content generator there. It was visible, sometimes impressive, but often isolated from the rest of the system.

That phase is ending. In 2026, generative AI is no longer treated as an add-on. It is becoming part of the underlying infrastructure that powers everyday workflows.

You can see this shift in how teams are using it. In software development, it is embedded directly into coding environments, helping write, review, and even refactor code in real time. In business operations, it generates reports, summarizes meetings, and pulls insights from large datasets without requiring manual analysis.

What is different now is not just capability, but placement. Generative models are no longer sitting on the edges of applications. They are integrated into the core workflow.

This shift has also forced a move from experimentation to production. Early adopters spent the last two years testing what generative AI could do. Now the focus is on reliability, cost, and consistency. Models are being fine-tuned, combined with traditional machine learning systems, and connected to structured data sources. The result is a hybrid approach where generative AI handles unstructured tasks like text and reasoning, while traditional models handle prediction and optimization.
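One way to picture the hybrid approach is a two-stage pipeline: a generative step turns free text into structured fields, and a traditional model scores those fields. The sketch below is an assumption-laden illustration. `extract_fields` uses keyword rules as a runnable stand-in for an LLM call, and the weights in `predict_priority` are invented rather than learned.

```python
import re


def extract_fields(ticket_text: str) -> dict:
    """Stand-in for a generative model that structures free text.

    A real system would call an LLM here; keyword rules keep the sketch runnable.
    """
    text = ticket_text.lower()
    return {
        "mentions_outage": bool(re.search(r"\b(down|outage|offline)\b", text)),
        "mentions_billing": bool(re.search(r"\b(invoice|charge|refund)\b", text)),
        "word_count": len(text.split()),
    }


def predict_priority(features: dict) -> float:
    """Stand-in for a traditional model scoring structured features.

    The weights are illustrative, not learned from data.
    """
    score = 0.1
    score += 0.6 if features["mentions_outage"] else 0.0
    score += 0.2 if features["mentions_billing"] else 0.0
    return round(min(score, 1.0), 2)


def triage(ticket_text: str) -> dict:
    features = extract_fields(ticket_text)  # unstructured -> structured
    priority = predict_priority(features)   # structured -> prediction
    return {"features": features, "priority": priority}
```

Keeping the two stages separate means each can be evaluated, versioned, and replaced on its own, which is much of the appeal of the hybrid design.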

The impact is already measurable. Companies are reporting up to a 30% reduction in workload after integrating generative AI into their workflows. That kind of improvement is not coming from isolated features. It comes from deep integration.

At this point, the conversation has shifted. Organizations are no longer asking whether they should adopt generative AI. The more relevant question is where it is still missing, and which parts of the workflow are still operating without it.

Trend 3: Smaller, Specialized Models Start Winning

For a while, progress in machine learning was easy to measure. Bigger models meant better performance. More parameters, more data, and better results. That logic pushed the industry toward massive systems that required serious compute, large budgets, and complex infrastructure.

In 2026, smaller and more specialized models are gaining ground, not because they are more impressive, but because they are more practical. These models are designed for specific tasks, trained on focused datasets, and optimized for real-world use rather than benchmark performance.

Small language models (SLMs) are a good example. Instead of trying to handle every possible task, they are built to perform extremely well within a narrow domain. That could be legal document analysis, customer support conversations, or internal knowledge retrieval. In these cases, a smaller model that understands the context deeply often outperforms a larger, more general one.

The advantages are hard to ignore. Smaller models are cheaper to run, faster to respond, and easier to deploy. They can run on local servers or even directly within applications without relying heavily on external infrastructure. This reduces latency and gives teams more control over performance and data privacy.

There is also a shift in how success is measured. Instead of asking how powerful a model is in general, teams are asking how well it performs in a specific context. A model that delivers consistent, accurate results for a single business-critical task is often more valuable than a large model that performs reasonably well across many tasks but lacks precision where it matters.

This is where the focus on efficiency comes in. Companies are starting to prioritize models that deliver strong results with lower operational costs. Training and running large models is expensive, and not every use case justifies that investment. Smaller models offer a better balance between performance and cost, especially when deployed at scale.

The underlying shift is simple. The industry is moving away from raw scale as the primary goal and toward usability. In practice, that means building models that fit the problem, not models that try to cover everything.

At this point, model size is no longer a flex. Return on investment is what matters, and specialized models are making a strong case.

Trend 4: Machine Learning Moves to the Edge (IoT + Real-Time Intelligence)

For years, most machine learning systems lived in the cloud. Data was collected, sent to centralized servers, processed, and then returned as predictions. That model worked, but it came with trade-offs: latency, bandwidth costs, and growing concerns around data privacy.

In 2026, that setup is starting to shift. More models are being pushed closer to where data is actually generated.

This is what edge machine learning looks like in practice. Instead of sending video feeds, sensor data, or user inputs to the cloud, the model runs directly on the device or near it. A security camera can detect unusual activity in real time. A mobile app can process voice or image data instantly. Industrial machines can monitor performance and react without waiting for a round trip to a remote server.

The difference between cloud machine learning and edge machine learning comes down to speed and control. Cloud systems are powerful and scalable, but every request pays for a network round trip. Edge systems remove that round trip because the computation happens locally, leaving only the inference time on the device itself. For use cases that depend on immediate responses, that difference matters.

Real-time inference is where this becomes critical. In areas like autonomous systems, healthcare monitoring, and smart infrastructure, even small delays can affect outcomes. Running models at the edge ensures decisions are made as events happen, not seconds later.

There is also a growing push around privacy. Sending large volumes of raw data to the cloud raises concerns, especially when that data includes sensitive information. Edge machine learning allows much of that processing to happen locally, with only necessary insights being shared. This reduces exposure and makes compliance easier for companies operating under strict data regulations.
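The "process locally, share only insights" pattern can be sketched with a tiny on-device monitor. This is a simplified example, not a production design: `EdgeMonitor` and `run_on_device` are hypothetical names, and a rolling z-score stands in for whatever model would actually run on the device.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeMonitor:
    """Runs on the device: keeps a short window and emits only anomaly events."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.readings = deque(maxlen=window)
        self.threshold = threshold

    def process(self, value: float):
        """Return a small event dict if the reading is anomalous, else None."""
        event = None
        if len(self.readings) >= 5:  # wait for a minimal baseline first
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                event = {"type": "anomaly", "value": value, "baseline": round(mu, 2)}
        self.readings.append(value)
        return event


def run_on_device(stream, monitor):
    """Only events leave the device; the raw readings stay local."""
    return [e for e in (monitor.process(v) for v in stream) if e is not None]
```

Feeding eight temperature readings with a single spike through `run_on_device` produces one small event rather than eight raw values, which is the bandwidth and privacy argument in miniature.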

The scale of connected devices is another factor driving this trend. The number of IoT devices is expected to reach 39 billion by 2030. With that many devices generating continuous streams of data, sending everything to the cloud is no longer efficient or practical.

What is happening here is not a complete shift away from the cloud, but a redistribution of computation. Some tasks will always require centralized processing, but an increasing number of decisions are being made at the edge.

Trend 5: MLOps and LLMOps Become Mandatory

It has never been easier to build a machine learning model. With open-source tools, pre-trained models, and APIs, a working prototype can be up and running in hours. The hard part begins after that.

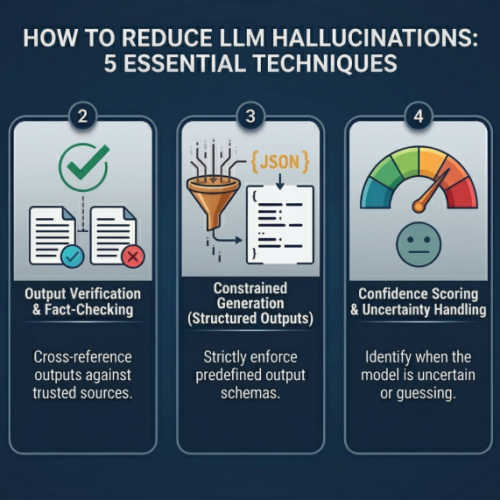

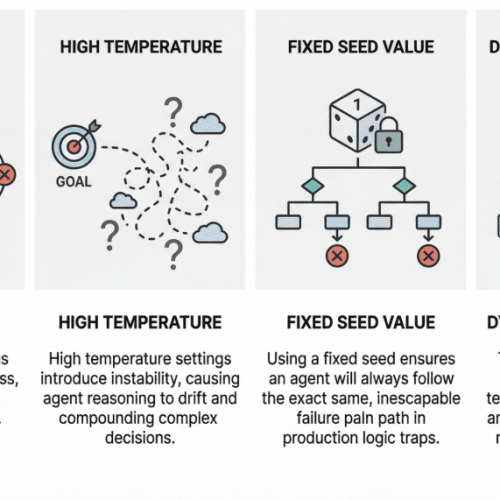

Running these systems reliably in production is where most teams struggle. This is where MLOps comes in. It focuses on everything that happens after a model is built: versioning, monitoring, deployment, scaling, and continuous updates. As models become more complex, especially with the rise of generative AI, this has expanded into LLMOps and even AgentOps. Each layer introduces new challenges. Prompt management, response evaluation, tool integration, and multi-step execution all need to be handled carefully.

The shift from experimentation to production has exposed gaps that were easy to ignore before. A model that performs well in testing can behave unpredictably in real-world conditions. Data changes, user behavior evolves, and small errors can scale quickly. Without proper monitoring, these issues often go unnoticed until they affect users.
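A common way to notice that "data changes" is to compare the distribution of a feature in production against the distribution seen at training time. The sketch below uses the Population Stability Index (PSI) as one example of such a check. The binning scheme and the `eps` floor are simplifying assumptions, and the alert threshold a team uses is a judgment call.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp out-of-range values
            counts[idx] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the two samples fall into the bins in identical proportions, and larger values mean more drift. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, though that cutoff should be validated against your own data.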

Teams are now treating machine learning systems the same way they treat critical software infrastructure. That means tracking performance over time, managing different versions of models, and setting up pipelines that allow updates without breaking existing systems. It also means building safeguards: logging outputs, detecting anomalies, and creating fallback mechanisms when things go wrong.
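The safeguards listed above (logging outputs, catching bad results, and falling back) can be combined in a thin wrapper around the model call. This is a minimal sketch: `predict_with_fallback` is a hypothetical helper, and `primary` and `fallback` are assumed to be any callables that take features and return a numeric score.

```python
import logging

logger = logging.getLogger("model_serving")


def predict_with_fallback(primary, fallback, features, valid_range=(0.0, 1.0)):
    """Call the primary model; fall back if it raises or returns an out-of-range score."""
    try:
        score = primary(features)
        lo, hi = valid_range
        if not (lo <= score <= hi):
            raise ValueError(f"score {score} outside {valid_range}")
        source = "primary"
    except Exception as exc:
        # Log the failure so it shows up in monitoring, then degrade gracefully.
        logger.warning("primary model failed: %s", exc)
        score = fallback(features)
        source = "fallback"
    logger.info("prediction source=%s score=%s", source, score)
    return {"score": score, "source": source}
```

Returning the `source` alongside the score lets downstream dashboards track how often the fallback is used, which is often the first visible sign that the primary model is degrading.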

Scaling is another pressure point. A model that works for a few users might fail under heavy demand. Latency increases, costs rise, and performance becomes inconsistent. MLOps practices help manage this by optimizing how models are served and ensuring resources are used efficiently.

What is clear in 2026 is that machine learning is no longer a side project. It is part of the core system. When it fails, the product fails with it. This is why operational maturity is becoming a competitive advantage. Teams that can deploy, monitor, and improve models consistently will move faster and build more reliable systems. Those that cannot will spend more time fixing issues than delivering value.

At this point, knowing how to build a model is not enough. The real differentiator is knowing how to run it at scale.

Trend 6: Human + AI Collaboration Becomes the Default

The early narrative around AI focused heavily on replacement: jobs lost, roles automated, and entire functions taken over. What is becoming clearer in 2026 is something more practical. Most of the value is coming from collaboration, not substitution.

AI is starting to feel less like a tool and more like a co-worker. The difference shows up in how work gets done. Instead of using software to execute fixed tasks, people are working alongside systems that can suggest, generate, review, and refine outputs in real time. The human sets direction, provides context, and makes final decisions. The AI handles the heavy lifting in between.

In hospitals, this might look like a system that summarizes patient histories, highlights key risks, and suggests possible next steps, allowing clinicians to focus on judgment and patient interaction. In marketing, teams are using AI to generate campaign ideas, test variations, and analyze performance faster than manual processes would allow. In engineering, developers are writing, reviewing, and debugging code with AI systems that can keep up with the pace of development.

What stands out is not just speed, but how roles are evolving. Tasks that used to take hours are now completed in minutes, which changes how time is spent. Instead of focusing on execution, people are spending more time on strategy, validation, and creative problem-solving.

The impact is already visible. Many organizations report efficiency gains as AI-assisted workflows become part of daily operations. These gains are not coming from removing humans from the loop, but from changing how they work within it.

This shift also introduces a new kind of skill. Knowing how to ask the right questions, guide outputs, and evaluate results becomes just as important as technical expertise. People who can effectively collaborate with AI systems are able to move faster and produce better results.

The idea of competing with AI is slowly losing relevance. The real advantage now comes from learning how to work with it and understanding where human judgment still matters most.

Trend 7: Responsible and Explainable AI Takes Center Stage

As machine learning systems become more embedded in decision-making, one question keeps coming up: can we trust what these systems are doing?

For a long time, many models operated like black boxes. They produced accurate results, but the reasoning behind those results was difficult to trace. That was acceptable when the stakes were low. It becomes a problem when those same systems are used in areas like finance, healthcare, hiring, or law enforcement.

This is where explainable AI, often referred to as XAI, starts to matter. It focuses on making model decisions more transparent. Instead of just giving an output, the system can show which inputs influenced that decision and how strongly. This makes it easier for teams to validate results, catch errors, and build confidence in how the system behaves.
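For the simplest case, a linear model, "which inputs influenced the decision and how strongly" can be read directly from the weights. The sketch below assumes a hypothetical credit-style scoring model. The feature names, weights, and bias are invented for illustration. Nonlinear models do not decompose this cleanly and typically need dedicated attribution methods such as SHAP or LIME.

```python
def explain_linear(weights, bias, features):
    """Return the score and per-feature contributions, largest impact first."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical model and applicant, for illustration only.
weights = {"income": 0.5, "debt_ratio": -2.0, "late_payments": -0.75}
applicant = {"income": 1.0, "debt_ratio": 0.5, "late_payments": 2}

score, ranked = explain_linear(weights, bias=1.0, features=applicant)
```

Here `ranked` lists `late_payments` first with a contribution of -1.5, then `debt_ratio` at -1.0, then `income` at 0.5, so a reviewer can see at a glance what pushed the score down to -1.0.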

At the same time, regulation is starting to catch up with adoption. Governments and regulatory bodies are introducing frameworks that require companies to be more accountable for how their AI systems are built and used. This includes how data is collected, how models are trained, and how decisions are made. Compliance is no longer just a legal concern; it is becoming part of the product itself.

Bias and fairness are also getting more attention. Machine learning systems learn from data, and if that data reflects existing biases, the model can amplify them. In practical terms, this can lead to unfair outcomes in areas like loan approvals, hiring decisions, or risk assessments. Addressing this requires more than technical fixes. It involves careful data selection, continuous monitoring, and clear accountability for outcomes.
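Continuous monitoring for fairness usually starts with a simple measurement. The sketch below computes approval rates per group and the gap between them, often called the demographic parity difference. It is one metric among several, the group labels and decisions are made-up sample data, and a small gap on this metric alone does not establish that a system is fair.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Made-up sample: group A approved 8 of 10, group B approved 5 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
gap = demographic_parity_gap(sample)
```

For this sample the approval rates are 0.8 and 0.5, so the gap is about 0.3. Tracking a number like this over time, per model version, turns "clear accountability for outcomes" into something that can be alerted on.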

Companies are starting to take this seriously, not just because of regulation, but because of user expectations. People want to understand how decisions that affect them are made. If a system denies a request or flags a risk, there needs to be a clear explanation behind it.

This growing focus on responsible AI is visible across both industry and policy. Ethical considerations are no longer treated as side discussions. They are becoming part of how systems are designed from the start.

The reason is simple. Without trust, adoption slows down. It does not matter how powerful a system is if people are hesitant to rely on it. In 2026, building accurate models is only part of the job. Building systems that people can understand and trust is just as important.

Wrapping Up

In 2026, machine learning is no longer just a set of tools or experimental features. It has moved into the background of workflows, quietly powering decisions, automating tasks, and collaborating with humans. The emphasis is shifting from building bigger or flashier models to creating systems that can act autonomously, integrate seamlessly with existing processes, and deliver real-world impact.

The trends we have explored — agentic AI, generative AI as infrastructure, specialized models, edge computing, operational excellence through MLOps, human-AI collaboration, and responsible AI — are not isolated developments. Together, they represent a new standard: machine learning systems that work, reliably and meaningfully, at the heart of business and daily life.

Machine learning in 2026 is less about building smarter models and more about building systems that actually do the work.